⚡ Key Takeaways

- Databricks is best for engineering-heavy teams building AI/ML pipelines, complex data engineering, and real-time streaming pipelines

- Snowflake is best for teams that prioritize a cloud-native data warehouse with near-zero management, where SQL analytics, BI reporting, and seamless data storage are the primary needs

- Qrvey sits on top of either Snowflake or Databricks, giving B2B SaaS teams a fully embedded, white-labeled analytics layer. Data never leaves their cloud, and the per-query costs that eat into margins as the tenant base grows are eased

Engineering leaders at growing SaaS companies usually revisit the Databricks vs Snowflake decision the moment query costs start compounding across tenants. (Or when the CFO visits you personally to discuss the data warehouse bill!)

The short answer: Snowflake wins on simplicity and SQL-native analytics; Databricks wins on flexibility and ML workloads. But neither answer is complete without understanding your data architecture.

Let’s break down where each platform wins, where it gets expensive fast, and what actually matters when your analytics layer needs to serve thousands of tenants without your cloud bill becoming a board-level conversation.

| | Databricks | Snowflake |
|---|---|---|
| Best For | Advanced analytics, AI/ML, unified data engineering, data science teams | Business intelligence, reporting, scalable cloud data warehousing |
| Standout Feature | Lakehouse architecture, native ML integration, open format support | Separation of compute/storage, instant scaling, zero management |
| Price | Usage-based, with tiered options for compute and storage | Usage-based, per-second billing, multiple editions |
| Pros | Flexible, powerful, supports multiple languages and formats, strong ML tools | Simple, fast, highly scalable, minimal admin, broad ecosystem |
| Cons | Steeper learning curve, may require more engineering resources | Limited advanced ML, less flexible for custom data engineering |
| Customer Support | Enterprise support, community forums, dedicated account managers | 24/7 support, extensive documentation, active user community |
| Data Sources | Delta Lake for ACID transactions | Secure data sharing across clouds |
| Ease of Use | Collaborative notebooks | Automatic clustering |
| Integrations | MLflow integration | Time travel for data |
| Other Niche-relevant Features | Real-time streaming analytics | Native semi-structured data support |

Who Is Databricks Best For?

Databricks was created by the original authors of Apache Spark. That heritage matters because Databricks is, at its core, a large-scale distributed compute engine that expanded into warehousing.

Databricks is the right fit for teams that need to:

- Run complex data pipelines across petabyte-scale datasets using Apache Spark

- Process unstructured data alongside structured tables (e.g., logs, raw event streams, images)

- Unify batch and real-time Event Stream Processing without stitching separate tools together

- Give data engineers and ML engineers full programmatic control in Python, SQL, Scala, or R

Who Is Snowflake Best For?

Snowflake started as a cloud-native data warehouse and has expanded into data engineering and AI. Its core strength is still what it was originally built for: fast, governed SQL analytics with almost no infrastructure overhead.

Snowflake is a strong fit for teams that need to:

- Run high-concurrency BI workloads and SQL analytics without managing clusters

- Share data securely across clouds, regions, or partners via the Snowflake Marketplace

- Store and query semi-structured data like JSON natively without pre-defining schemas

- Scale compute instantly using virtual warehouses sized XS through 6XL

Price

Both Databricks and Snowflake use consumption-based models, but the way they calculate processing units differs.

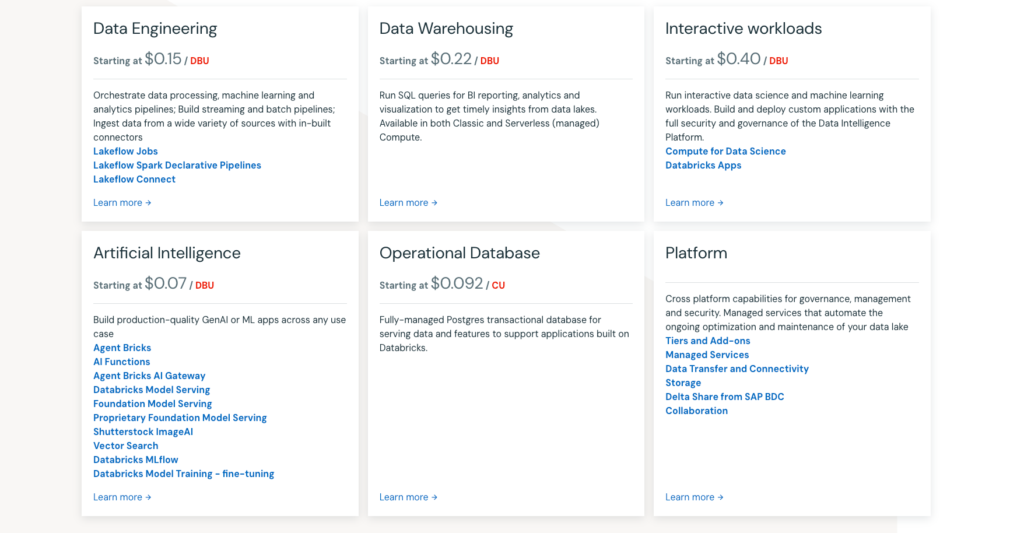

Databricks

Databricks charges using Databricks Units (DBUs), a unit of processing capability per hour, billed per second. The DBU rate varies by compute type, pricing tier, and cloud provider.

Key pricing realities:

- Jobs Compute starts at roughly $0.15/DBU on the Standard tier (AWS, US)

- All-Purpose Compute (used for interactive notebooks) runs $0.40–$0.55/DBU. That’s often more expensive than running the same workload as an automated job

- Two separate bills: Databricks charges DBUs, and your cloud provider (AWS, Azure, GCP) charges separately for the underlying VMs and storage

- You can save money by using “spot” instances for non-critical streaming pipelines.
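The two-bill structure above is easier to reason about with numbers in hand. Here is a minimal sketch, assuming a fixed-size cluster and made-up inputs: the `ClusterRun` class, the DBU-per-node figure, and the VM rate are all hypothetical placeholders for illustration, not quoted prices. Only the $/DBU rates echo the rough ranges mentioned above.

```python
from dataclasses import dataclass


@dataclass
class ClusterRun:
    """One cluster run. Every figure is a hypothetical input, not a quoted price."""
    dbu_per_node_hour: float  # DBUs one node consumes per hour (varies by instance type)
    nodes: int                # nodes in the cluster
    hours: float              # wall-clock runtime in hours
    dbu_rate_usd: float       # $/DBU for the chosen compute type and tier
    vm_rate_usd: float        # $/node-hour billed by the cloud provider


def databricks_bill(run: ClusterRun) -> float:
    """Bill 1: DBU charges from Databricks."""
    return run.dbu_per_node_hour * run.nodes * run.hours * run.dbu_rate_usd


def cloud_bill(run: ClusterRun) -> float:
    """Bill 2: VM charges from the cloud provider (storage left out for brevity)."""
    return run.vm_rate_usd * run.nodes * run.hours


def total_cost(run: ClusterRun) -> float:
    """Sum of both bills for a single run."""
    return databricks_bill(run) + cloud_bill(run)


# The same hypothetical workload, priced as an automated job and as interactive compute.
as_job = ClusterRun(dbu_per_node_hour=2.0, nodes=4, hours=3.0,
                    dbu_rate_usd=0.15, vm_rate_usd=0.50)
as_notebook = ClusterRun(dbu_per_node_hour=2.0, nodes=4, hours=3.0,
                         dbu_rate_usd=0.40, vm_rate_usd=0.50)

for label, run in (("job", as_job), ("notebook", as_notebook)):
    print(f"{label}: DBU ${databricks_bill(run):.2f} + VM ${cloud_bill(run):.2f} "
          f"= ${total_cost(run):.2f}")
```

Swap in the figures from your own invoices to see how much of the total is DBUs versus infrastructure, and how much moving a notebook workload to a scheduled job would change it.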

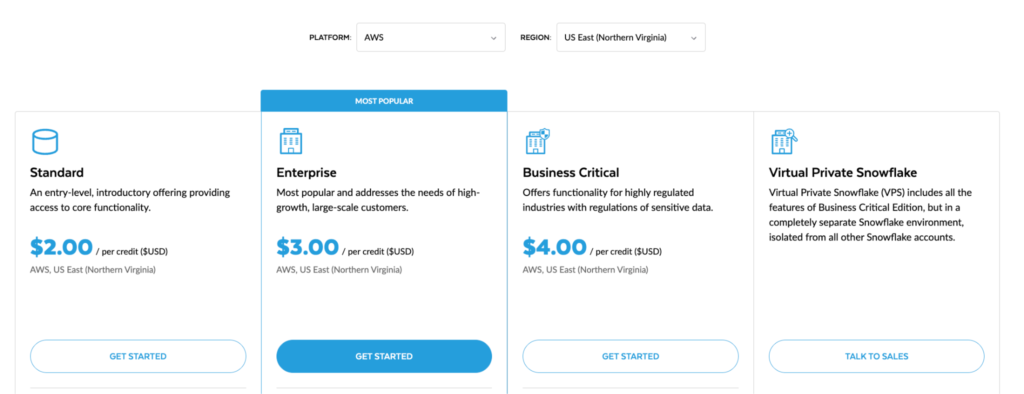

Snowflake

Snowflake uses a credit-based system. You consume credits whenever a virtual warehouse is running, billed by the second, with a 60-second minimum at startup.

- Standard edition: ~$2/credit. Enterprise: ~$3/credit. Business Critical: ~$4/credit.

- Virtual warehouses range from X-Small (1 credit/hour) to 6X-Large (512 credits/hour), doubling at each tier

- Storage is billed separately at approximately $23/TB/month on AWS US regions

- Medium-sized teams with regular ETL operations often land in the $2,000–$10,000/month range. Large enterprises easily exceed $50,000/month.
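The credit math above can be sketched in a few lines. This is a rough illustration, not Snowflake's billing logic: it assumes a single warehouse start per run, applies the 60-second minimum once, and ignores storage and cloud services charges. The function names are hypothetical.

```python
# Warehouse sizes in order; credits per hour double at each step, starting from 1.
WAREHOUSE_SIZES = ["XS", "S", "M", "L", "XL", "2XL", "3XL", "4XL", "5XL", "6XL"]


def credits_per_hour(size: str) -> int:
    """XS = 1 credit/hour, doubling with every size up to 6XL = 512."""
    return 2 ** WAREHOUSE_SIZES.index(size)


def billed_seconds(runtime_seconds: float) -> float:
    """Per-second billing with a 60-second minimum at startup."""
    return max(60.0, runtime_seconds)


def run_cost(size: str, runtime_seconds: float, usd_per_credit: float) -> float:
    """Approximate compute cost of one warehouse run in USD."""
    credits = credits_per_hour(size) * billed_seconds(runtime_seconds) / 3600
    return credits * usd_per_credit


# A 10-second query on a freshly resumed Medium warehouse is billed as 60 seconds.
print(run_cost("M", 10, usd_per_credit=3.0))
# One full hour on an X-Small warehouse at a Standard-like rate.
print(run_cost("XS", 3600, usd_per_credit=2.0))
```

The 60-second floor is why many short, bursty dashboard queries against a warehouse that keeps suspending and resuming can cost more than their actual runtime suggests.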

Verdict

Databricks is often cheaper for massive big data analytics because you control the cluster sizing. Snowflake is more predictable for standard BI workloads because warehouses scale and suspend automatically. Always verify with each vendor’s official pricing calculator and talk to their sales teams before committing.

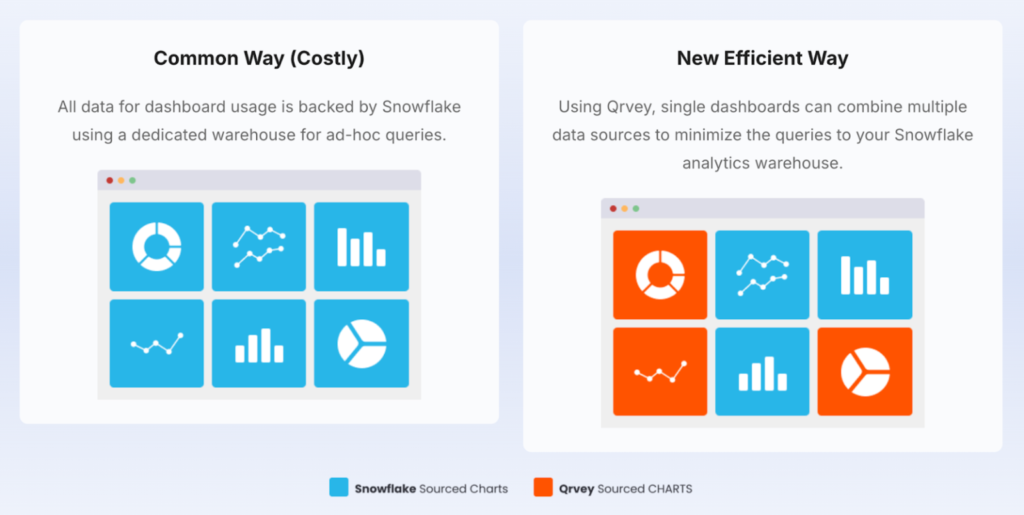

Pairing either platform with an embedded analytics layer such as Qrvey lets you serve thousands of end users with high-performance embedded data visualization without spinning up expensive virtual warehouses for every chart refresh.

Ease of Use

In a multi-tenant environment, “easy to use” means the difference between a seamless, automated onboarding process and a manual migration project for every new enterprise customer you sign.

Databricks

Databricks rewards engineering expertise as it’s code-centric and assumes familiarity with Python, SQL, or Scala.

- Collaborative notebooks: Real-time shared editing, great for exploration and experimentation

- Multi-language support: Python, SQL, Scala, R all in one workspace

- Cluster Management: You control cluster sizing, cluster resizing, and auto-scaling. More flexibility but also more decisions that directly affect your bill

- IDE integrations: VS Code, JetBrains, Jupyter; engineers feel at home

Snowflake

Snowflake’s design philosophy is: remove infrastructure complexity so analysts can focus on data.

- SQL-first interface: Clean web editor, no clusters to configure

- Auto-scaling and auto-suspend: Multi-cluster warehouses add clusters when demand spikes, and idle warehouses pause automatically

- Snowsight: Snowflake’s modern UI makes result exploration accessible to non-engineers

- Snowpark API: Lets developers write Python, Java, or Scala code that runs natively inside Snowflake, bridging the gap with data science

The trade-off: less control means less customizability. If you need to customize execution behavior or process unstructured data at scale, Snowflake’s abstraction layer may become a ceiling.

Verdict

Snowflake wins on time-to-market because it functions like a true SaaS product: zero-management and ready for SQL analytics on day one. Databricks is built for engineering-heavy teams that want full control.

Customer Support

Both vendors are Gartner Magic Quadrant leaders, and their support reflects that. For a scaling SaaS, this means fewer blocked migration projects and faster resolution for complex data engineering hurdles that would otherwise stall your product roadmap.

Databricks

- Community Edition includes documentation and community forum access

- Premium tier adds technical support with defined SLAs

- Enterprise tier includes dedicated account managers and priority support channels

- Strong official documentation and active community forums

- Hands-on onboarding support increases with contract size

Snowflake

- 24/7 support available on Enterprise and Business Critical editions

- Extensive official documentation and one of the most active community forums in the data space

- Premier Support tier includes dedicated technical account managers

- Training through Snowflake University with structured learning paths and hands-on labs

Verdict

Both platforms offer solid enterprise support at higher tiers. Snowflake has a slight edge for round-the-clock availability. Databricks support tends to be stronger for engineering-focused accounts with dedicated relationships.

Integrations

True scale requires a platform that natively talks to your multi-tenant databases and cloud object storage layers.

Databricks

Databricks integrates deeply across the modern data engineering and AI stack:

- Apache Spark: the compute foundation; native compatibility with Spark-based tools

- Delta Lake and Delta Live Tables: built-in ACID transactions, managed pipelines, and CDC

- Unity Catalog: unified data governance across structured data, files, ML models, and AI assets

- Managed MLflow: experiment tracking, model registry, and deployment in one tool

- Apache Iceberg and Apache Hudi: open Data Lake Table Formats for cross-platform interoperability

- Event Stream Processing via Spark Structured Streaming: native real-time ingestion from Kafka, Kinesis, Event Hub

- Downstream connectors to Power BI, Tableau, and other BI dashboards

Snowflake

Snowflake’s integration ecosystem is broad and strategically built around data sharing:

- Snowflake Marketplace: access third-party data sets and apps directly within your account

- Snowpark API: write Python, Java, or Scala transformations that execute inside Snowflake’s engine

- Polaris Catalog: open-source Apache Iceberg catalog implementation for cross-platform interoperability

- Power BI, Tableau, Looker, Qrvey: first-class connectors for reporting and data visualization

- ETL tools: dbt, Fivetran, Airbyte all have native Snowflake connectors

Verdict

Databricks integrates more deeply into the ML and data science toolchain. Snowflake integrates more broadly with analytics, reporting, and third-party cloud platforms.

If your stack has heavy ETL operations and downstream BI reporting, Snowflake’s ecosystem friction is lower. If you’re building AI pipelines and need a unified governance layer for models and data together, Databricks wins.

Architecture and Data Model

For a CTO building a multi-tenant application, this architecture determines your margin. Whether you choose a shared-disk or Lakehouse architecture shapes how you’ll isolate tenant data and manage cluster resizing without service interruptions as you scale to thousands of users.

Databricks

Databricks pioneered the Lakehouse architecture, a design that combines the flexibility of Data Lakes with the reliability and performance of data warehouses.

Here’s what that means under the hood:

- Data stays in your cloud object storage (S3, ADLS, GCS) in open Parquet-based formats via Delta Lake.

- Delta Live Tables handle declarative data pipelines with built-in quality enforcement and change data capture (CDC), enabling A/B Testing of pipeline logic without manual wiring

- The Databricks Data Intelligence Platform is built on open formats, open source, and an open Data Lake catalog. Your architecture belongs to you, not Databricks’ roadmap.

- Cluster Management gives teams full control of cluster sizing and cluster resizing, but this also means misconfigured clusters are one of the most common sources of runaway costs

Snowflake

Snowflake’s architecture is a proprietary three-layer cloud-native data warehouse: a storage layer, a compute layer (virtual warehouses), and a cloud services layer handling query optimization and metadata.

What this means in practice:

- Workload isolation is a built-in design choice: a runaway query in one warehouse won’t affect others, which matters a lot in high-concurrency environments

- Micro-partitioning: Snowflake automatically organizes data into small, compressed partitions and uses metadata pruning to skip irrelevant data during queries.

- Snowflake Data Warehouse handles semi-structured data (JSON, Avro, Parquet) natively via the VARIANT data type, no schema pre-definition needed

- Encryption at rest and end-to-end encrypted connections are built into every edition
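To make the micro-partitioning point above concrete, here is a toy model of metadata pruning. It is a simplified sketch, not Snowflake's actual storage engine: the `Partition` class, the fixed partition size, and the single integer column are all assumptions made for illustration. The idea is only that each partition records a min and max, so a range query can skip partitions without reading their rows.

```python
from dataclasses import dataclass


@dataclass
class Partition:
    """A toy partition: the rows plus min/max metadata for one column."""
    min_value: int
    max_value: int
    rows: list


def build_partitions(values: list, size: int) -> list:
    """Split values into fixed-size chunks and record min/max for each chunk."""
    partitions = []
    for start in range(0, len(values), size):
        chunk = values[start:start + size]
        partitions.append(Partition(min(chunk), max(chunk), chunk))
    return partitions


def query_range(partitions: list, low: int, high: int):
    """Return matching values and how many partitions were actually scanned."""
    hits, scanned = [], 0
    for part in partitions:
        # Metadata-only check: skip partitions whose range cannot overlap the query.
        if part.max_value < low or part.min_value > high:
            continue
        scanned += 1
        hits.extend(v for v in part.rows if low <= v <= high)
    return hits, scanned


parts = build_partitions(list(range(100)), size=10)
hits, scanned = query_range(parts, low=25, high=34)
print(f"matched {len(hits)} rows, scanned {scanned} of {len(parts)} partitions")
```

Pruning works best when the data is loaded in an order that correlates with the filter column. With randomly ordered values, almost every partition's min/max range would overlap the query and little would be skipped.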

Verdict

For a data lakehouse supporting AI/ML workloads where you want full control over your storage layer, Databricks is the stronger architectural choice. For a governed, high-concurrency Cloud Database Management System that analysts use directly, Snowflake’s architecture is cleaner and lower-maintenance.

AI and Machine Learning Capabilities

For B2B SaaS, artificial intelligence is the ultimate monetization lever. If your cloud data platform can’t natively support Managed MLflow or Event Stream Processing, you’re leaving expansion revenue on the table while your competitors ship automated, data-driven insights.

Databricks

Databricks has native, deep ML support, arguably its biggest differentiator:

- Managed MLflow: Integrated experiment tracking, model registry, and one-click deployment for machine learning models

- Mosaic AI: Covers the full generative AI model lifecycle: training, fine-tuning, vector storage, and real-time inference

- AI/BI Genie: Natural language interface for big data analytics, designed for analysts who don’t want to write SQL

- GPU cluster support: Run model training directly in the same platform as your data pipelines, no context switching

Snowflake

Snowflake has invested heavily in AI capabilities, approaching it from the data warehouse side:

- Cortex AI: Brings AI inference directly inside Snowflake without data movement, using LLMs via SQL

- Snowpark API: Run Python, Java, or Scala ML code inside Snowflake’s engine, keeping data in place

- Native support for calling external LLMs (OpenAI, Mistral) directly from SQL

- Strong for data science use cases where the data already lives in Snowflake and you want to avoid extract-transform-load cycles

- Less suited for custom model training at scale or GPU-heavy workloads

See how to build charts using AI with Qrvey in this clickable demo.

Verdict

Databricks leads on end-to-end ML/AI development and model training. Snowflake leads on operationalizing AI within existing SQL workflows without adding new infrastructure. If you want AI-assisted analytics on top of your existing data warehouse, Snowflake is simpler to start.

How to Choose the Best Cloud Data Platform for Embedded Analytics

Are you delivering scalable multi-tenant analytics to your customers?

This is the question that Databricks and Snowflake weren’t designed to answer. Both platforms can effectively centralize and process your company’s data, but neither was built to serve analytics directly to thousands of your end customers, isolated by tenant, embedded inside your product.

If your roadmap includes customer-facing dashboards, self-service reporting, or embedded insights inside your SaaS application, you’re looking at a problem that sits above the data layer.

To avoid a costly migration project later, you must evaluate whether the platform handles multi-tenant data isolation and high-frequency concurrent queries well.

Here are the three important questions:

1. What Does Your Team Build Day-to-Day?

If your team’s daily work centers on data engineering, ML pipelines, and data science experimentation, Databricks usually wins. If it’s SQL analytics, concurrent reporting, and governed data sharing, Snowflake typically fits better.

But neither is a replacement for a dedicated multi-tenant analytics platform. Use Qrvey to bridge the gap, connecting directly to your warehouse to deliver secure, white-labeled dashboards to your end users.

2. How Much Engineering Overhead Can You Absorb?

Choosing Databricks means committing to ongoing cluster management, while Snowflake offers a hands-off cloud data platform at a premium price point. The real cost is the opportunity cost of your developers acting as data plumbers.

If analytics requests are eating your roadmap, it’s a sign to shift the burden. Qrvey’s multi-tenant analytics platform enables end-user self-service, ensuring your engineers spend their cycles on innovation, not support tickets.

3. Who Are You Serving?

Databricks and Snowflake are both strong answers to: “How do we build our internal data infrastructure?” Neither is a complete answer to: “How do we put powerful analytics in the hands of our customers inside our product?”

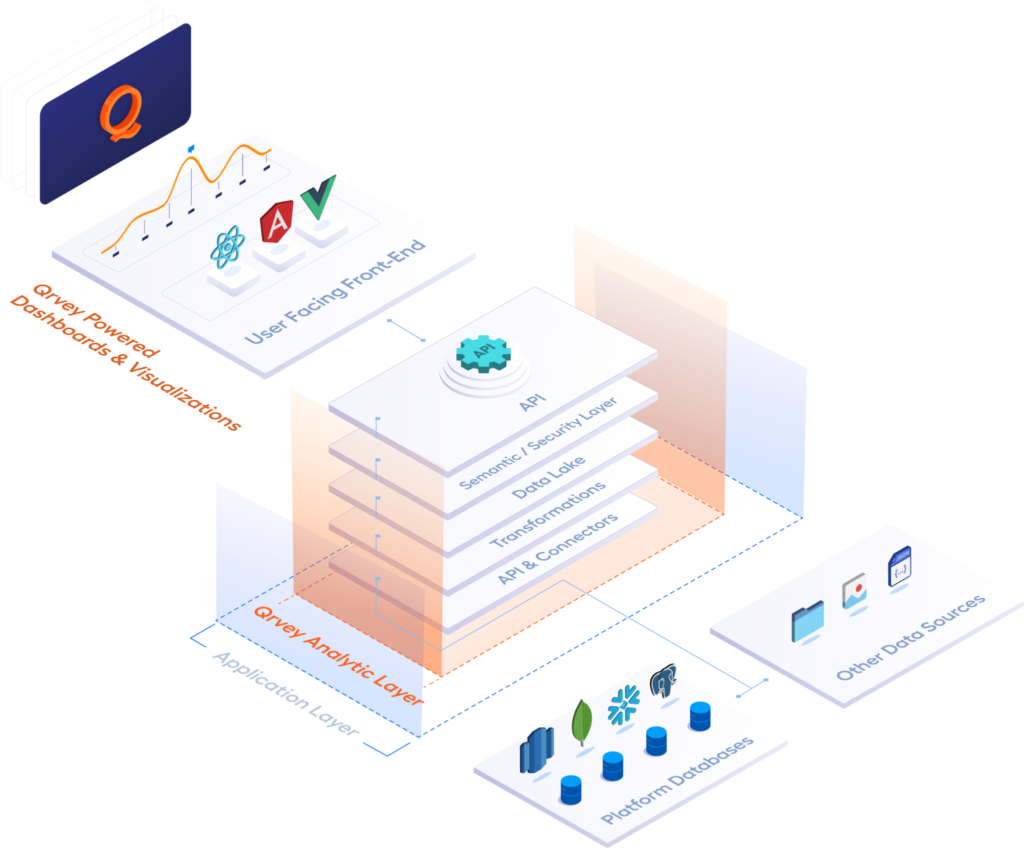

For SaaS providers building customer-facing analytics, Qrvey is purpose-built for that challenge. It’s designed to work on top of either Databricks or Snowflake, not instead of them. Your warehouse handles the data layer. Qrvey handles the analytics and embedded front-end layer your tenants actually interact with.

Qrvey deploys in your cloud, inherits your security model, and gives each of your tenants an isolated analytics experience, without your engineering team building it from scratch.

Whether your data lives in Databricks Delta Lake or a Snowflake virtual warehouse, Qrvey connects directly and serves it out as white-labeled, tenant-scoped dashboards inside your product.

The alternative, building it yourself, typically takes 10x longer and carries an ongoing maintenance burden that pulls engineering away from your core product. That cost compounds with every sprint, and you can avoid it by embedding a purpose-built solution.


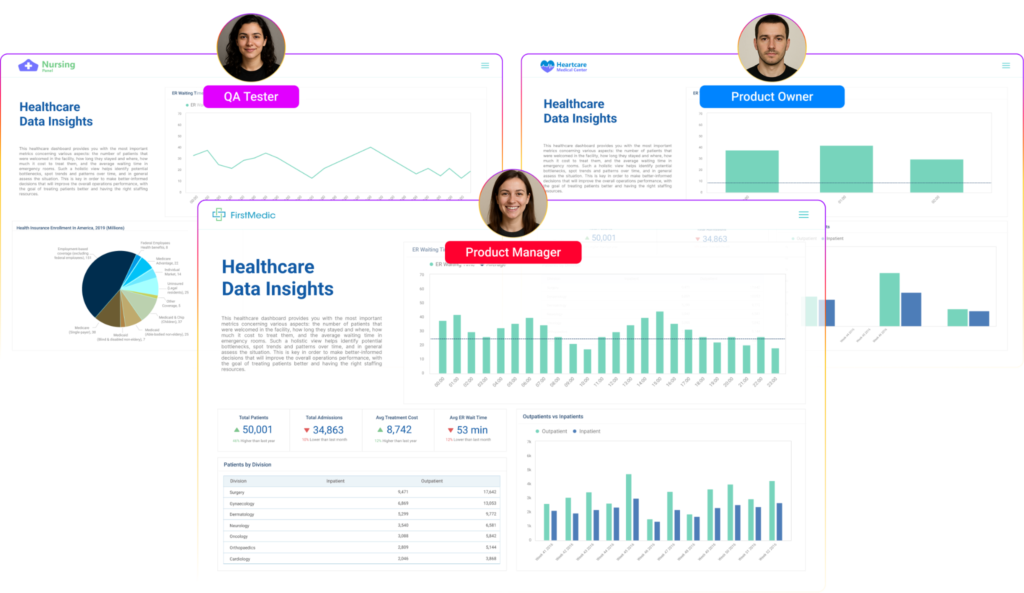

Qrvey: A Complementary and Standalone Alternative to Databricks and Snowflake

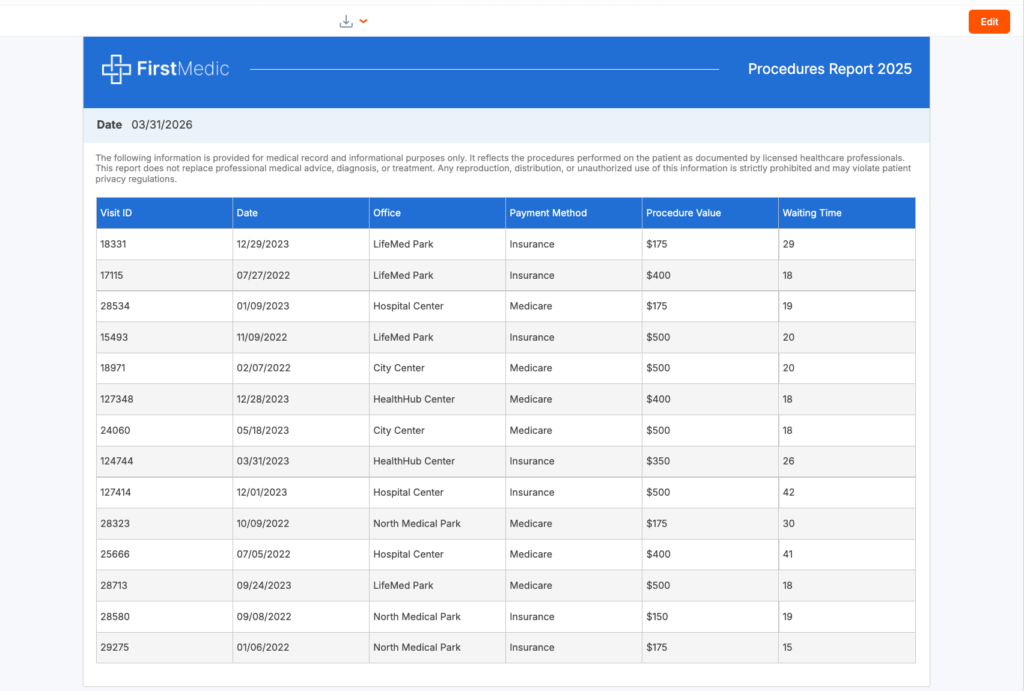

If you’re a B2B SaaS company building analytics for your customers, Qrvey plays a fundamentally different role than Databricks or Snowflake. Our SaaS customers use either solution in conjunction with Qrvey for efficiency gains and cost savings.

Databricks and Snowflake are excellent for centralizing, transforming, and governing your company’s data. Qrvey sits on top of either platform and turns that data into a customer-facing analytics experience embedded inside your product, serving every tenant independently.

Many Qrvey customers use Snowflake or Databricks as their data layer and Qrvey as their embedded analytics and front-end layer. This means they get the best of both: enterprise-grade data infrastructure and a purpose-built multi-tenant analytics experience without building it from scratch.

That said, for SaaS teams whose primary use case is embedded analytics, not general-purpose data management or large-scale ETL, Qrvey can also serve as a complete standalone solution.

Qrvey includes its own data engine, which means you can reduce the number of tools in your stack and meaningfully lower your total cost of ownership. If you’re not running petabyte-scale data science pipelines or complex cross-cloud data sharing, you may not need both layers at all.

Key Features

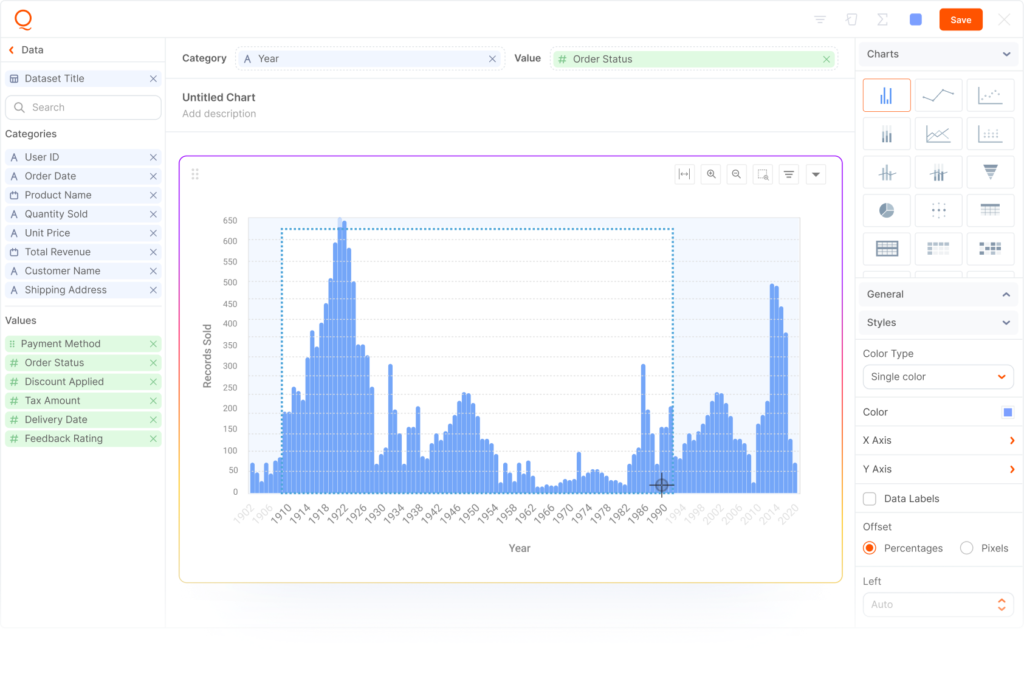

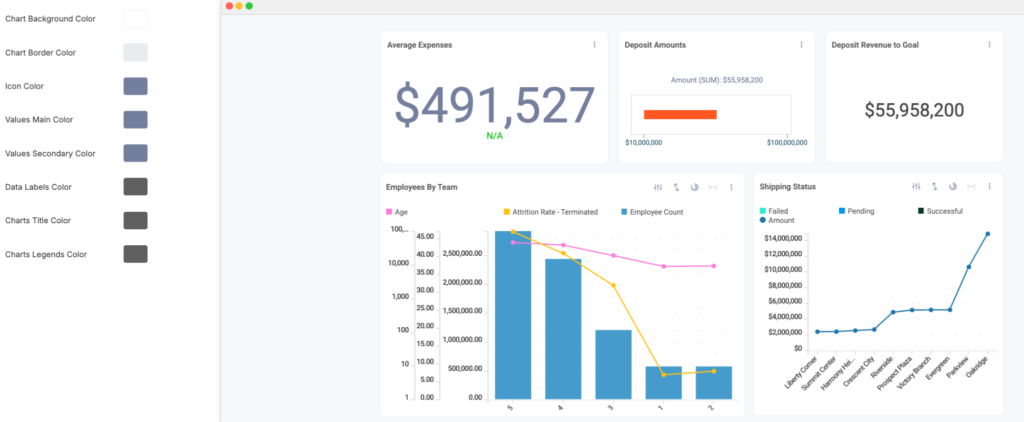

Fully Embedded Self-Service Dashboards

Your customers build their own dashboards, charts, and reports inside your product using an embedded drag-and-drop builder, no SQL required. Each tenant gets an isolated analytics environment. You embed it via JavaScript and control every pixel of the experience.

Native Multi-Tenant Architecture with Security Token Auth

Qrvey doesn’t require you to create users in the platform or duplicate your security model. Instead, it generates security tokens on the fly that inherit permissions directly from your application. Row-level, column-level, and schema-level security all flow through automatically.

At scale, say 1,000 tenants, each with different data access rules, you’re not maintaining 1,000 separate security configurations. The token model handles it.

That’s a meaningful reduction in engineering hours compared to building this in-house.
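The general pattern behind token-based tenant scoping can be sketched with the standard library. To be clear, this is not Qrvey's API or token format: the signing key, claim names, and helper functions below are all hypothetical, and a production system would more likely use an established token standard. The sketch only shows how one signed token can carry a tenant's row-level and column-level rules, so nothing has to be configured per tenant in the analytics layer.

```python
import base64
import hashlib
import hmac
import json
import time

SECRET = b"demo-signing-key"  # hypothetical shared secret, for illustration only


def issue_token(tenant_id, columns, ttl_seconds=300, now=None):
    """Host application: sign a short-lived token describing what this tenant may see."""
    issued_at = time.time() if now is None else now
    claims = {"tenant_id": tenant_id, "columns": columns,
              "exp": int(issued_at) + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def verify_token(token, now=None):
    """Analytics layer: reject tampered or expired tokens, otherwise return the claims."""
    payload, _, signature = token.rpartition(".")
    expected = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        raise ValueError("invalid signature")
    claims = json.loads(base64.urlsafe_b64decode(payload))
    current = time.time() if now is None else now
    if claims["exp"] < current:
        raise ValueError("token expired")
    return claims


def scoped_rows(rows, claims):
    """Apply row-level (tenant) and column-level filters taken from the token."""
    return [{col: row[col] for col in claims["columns"]}
            for row in rows if row["tenant_id"] == claims["tenant_id"]]


rows = [
    {"tenant_id": "acme", "revenue": 120, "cost": 80},
    {"tenant_id": "globex", "revenue": 300, "cost": 210},
]
token = issue_token("acme", ["tenant_id", "revenue"])
print(scoped_rows(rows, verify_token(token)))
```

Because the access rules travel with each request, onboarding tenant number 1,001 means issuing a token with different claims rather than creating another security configuration.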

Take a peek at how to Set up Record Level Security in Qrvey in this clickable demo.

AI Chart Builder and AI Insights

Qrvey’s AI Chart Builder lets end users type a natural language question and get a visualization on their dashboard, without touching SQL or opening a ticket.

Smart Analyzer lets them ask follow-up questions about any existing chart: trends, anomalies, comparisons. It runs against live filtered data, not static summaries.

This is what modern data visualization looks like when it’s built for end users, not engineers.

No-Code Workflow Automation

Qrvey’s automation builder lets product teams (or their customers) create data-triggered workflows without writing code.

Send a Slack alert when a metric crosses a threshold, trigger a webhook when a tenant’s key number changes, or push a pixel-perfect report via email every Monday.

This is the kind of feature that increases product stickiness without adding engineering scope.

Pricing

Qrvey offers transparent, scalable pricing tailored to SaaS providers, available as either subscription-based licensing or a perpetual license. Contact Qrvey to request pricing based on your deployment preferences and feature needs. Plans are available for startups through large enterprises.

| Plan | Best For | Key Features | Deployment |
|---|---|---|---|
| Qrvey Pro | Fast, lightweight analytics | Embedded dashboards, reporting, automation, AI | Your cloud (AWS/Azure/GCP coming soon) |
| Qrvey Ultra | Full-stack, high-performance | Built-in data engine, transformation layer, advanced security, multi-cloud | Your cloud (AWS/Azure/GCP coming soon) |

Where Qrvey Shines

- SaaS-Focused Design: Qrvey is built from the ground up for multi-tenant SaaS analytics, making it a natural fit for software companies.

- Rapid Deployment: No-code/low-code tooling means your team can go from zero to a working embedded analytics layer in weeks, eliminating build cycles that span several months.

- Automation & AI: Advanced automation and GenAI features streamline analytics and drive smarter decision-making.

- Works Alongside Your Existing Stack: Qrvey integrates directly with Snowflake and Databricks so you don’t have to choose. Use your warehouse for data engineering and ETL; use Qrvey to deliver the customer-facing embedded analytics layer on top.

Where Qrvey Falls Short

- Not a General-Purpose Data Warehouse: Qrvey is not designed for heavy-duty data engineering or massive-scale ETL pipelines like Databricks or Snowflake.

- Limited Open-Source Integrations: While Qrvey integrates with many SaaS tools, it may not offer the same breadth of open-source connectors as Databricks.

Customer Reviews

“The flexibility and ease of use with Qrvey’s platform allows us to satisfy any use case that our customers ask for.” — David Anderson, CEO at EvenFlow.ai

“Qrvey is one of the only tools out there that gives us the ability to embed a full suite of analytics into web apps.”— Dara Kharabi, Product Lead at Farlinium

Who Qrvey Is Best For

- SaaS product leaders: Who need to ship embedded analytics without it consuming the roadmap

- SaaS engineering leaders: Who want to stop building and maintaining a custom analytics layer from scratch

- SaaS executives: Who want to monetize analytics as a product feature, not treat it as a cost center

Databricks vs Snowflake vs Qrvey: Closing Note

As a SaaS company, your customers don’t care about your infrastructure choices. They care about what they can see, explore, and act on inside your product. That’s the gap Qrvey fills, and it’s one that Databricks and Snowflake weren’t designed to close.

The most practical framing: use Databricks or Snowflake for your data layer, and Qrvey for your customer-facing embedded analytics layer. They’re not mutually exclusive. For many SaaS companies, combining them is exactly the right answer. Qrvey reduces the Snowflake query load and eliminates the engineering cost of building an analytics layer from scratch, while your warehouse keeps doing what it does best.

Explore how Qrvey’s built-in data engine can consolidate your stack entirely and provide you with analytics that feel native to your product, without the build cost or the warehouse bill that comes with serving it at scale.

Natan brings over 20 years of experience helping product teams deliver high-performing embedded analytics experiences to their customers. Prior to Qrvey, he led the Client Technical Services and Support organizations at Logi Analytics, where he guided companies through complex analytics integrations. Today, Natan partners closely with Qrvey customers to evolve their analytics roadmaps, identifying enhancements that unlock new value and drive revenue growth.
