⚡Key Takeaways

- Building a data warehouse in 2026 means using cloud-native architecture, ELT pipelines, and tenant-aware modeling so your SaaS product can safely serve thousands of customers without duplicating infrastructure

- Most teams fail when building a data warehouse from scratch because they design for storage, not application-level delivery, which creates scaling and security issues later

- The fastest way to build a data warehouse today is combining cloud warehouses, version-controlled transformations, and embedded delivery that queries warehouse data in real time

- For SaaS companies, a warehouse alone isn’t enough. You still need a secure analytics layer that handles multi-tenant isolation, row-level security, and self-service access per tenant

Most SaaS teams start with the same assumption: stand up Snowflake, point the dashboards at it, done. Then the query costs spike, tenant isolation gets patched together with row-level filters, and the engineering team is maintaining a data layer nobody fully understands.

Building a data warehouse that scales with a SaaS product requires a different set of decisions from the start around data models, tenancy architecture, and how analytics queries interact with your warehouse at volume.

This guide covers the full picture: what to build, what to buy, and what to decide before you write a single line of SQL.

Why Companies Are Building Data Warehouses

Companies build data warehouses because the alternative of patching together exports, APIs, and gut instinct eventually breaks in front of a customer.

Self-Service Access Reduces Churn

SaaS users hate feeling locked out of their own data. When you build data warehouse systems, you democratize access.

For example, EvenFlow AI used this approach to move away from manual Excel analysis, reducing operational inefficiencies by 30% without adding engineering headcount.

Query Performance That Scales

Operational databases (your Postgres, Microsoft SQL Server) are built for transactions, not analysis. Running a complex report across millions of rows on a transactional database is like asking a delivery truck to win a drag race.

Warehouses use distributed architecture and columnar storage so analytical queries run fast as data volumes grow.

Lower Cost for Analytical Workloads

Cloud-based warehouses like Amazon Redshift and Google BigQuery separated compute from storage, which changed the math entirely.

Organizations that migrated to cloud-based data models have saved as much as $2 billion over a five-year period by shifting from upfront capital expenses to flexible consumption. That saving comes from not having to overprovision hardware for peak loads you might see twice a year.

The Foundation for Machine Learning and Generative AI

A warehouse is more than a reporting store. It is the substrate for every data analysis, machine learning model, and generative AI feature you’ll want to ship.

You can’t build a meaningful recommendation system without clean, centralized historical data. Building the warehouse correctly now means future AI investments don’t start from scratch.

Monetizing Your Data

When you create data warehouse structures that support multi-tenant analytics, you stop being a utility and start being a strategic partner. You can upsell premium tiers with real-time analytics or machine learning insights, turning a cost center into an ARR driver.


Core Components of a Modern Data Warehouse

Before you write a single line of pipeline code, it helps to know what you’re actually building. Here’s every layer a modern data warehouse needs, and what it does.

| Component | What It Does | Examples |
|---|---|---|
| Data Sources | Where raw data originates | CRMs, APIs, Excel spreadsheets, event logs |
| Ingestion / ETL Layer | Moves and prepares data | Fivetran, Airbyte |
| Staging Area | Holds raw data before transformation | S3 buckets, Cloud Storage |
| Data Transformation Layer | Cleans, joins, reshapes data | dbt, Power Query, cloud-based transforms |
| Storage (Warehouse) | Stores structured, query-ready data | Amazon Redshift, Google BigQuery, Azure Synapse, Snowflake |
| Data Mart | Subset of warehouse for specific teams | Marketing mart, finance mart |
| Semantic / Analytics Layer | Maps data to business terms, controls access | Qrvey, Looker Studio, Power BI |
| Metadata Component | Tracks data lineage, ownership, definitions | Data catalogs, data observability tools |

Building a Data Warehouse: Step by Step

Building a data warehouse is a sequence of decisions that compound on each other, where getting step three wrong usually means redoing steps one and two. Follow this sequence to build one that doesn’t become a “data swamp”.

Step 1: Define Business Goals Before Touching Infrastructure

A trap most teams fall into is evaluating Amazon Redshift vs Google BigQuery before answering the most important question: What decisions will this warehouse help someone make?

One report found that 80% of organizations will fail at big data initiatives without well-defined objectives.

For SaaS teams, that failure looks familiar: a warehouse gets built, ETL jobs run every night, but adoption is low because neither the internal team nor the end users know how to use the data. Why? Nobody defined what “useful” looked like before the first pipeline fired.

Before writing a line of infrastructure code:

- List the top five reports the business currently makes manually (think Excel spreadsheets, email threads, SharePoint List exports)

- Identify who owns each data domain: marketing campaign data, operational expenses, conversion rate reporting. No pipeline should go to production without a data owner

- Define latency requirements: does your team need real-time analytics or is a nightly batch load fine?
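
One way to make these three answers concrete is to record them as data and block any pipeline that lacks them. The sketch below is illustrative only: `ReportRequirement`, its fields, the 15-minute threshold, and the sample reports are hypothetical names and values, not part of any tool mentioned in this guide.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReportRequirement:
    """One row of the requirements list gathered before any infrastructure work."""
    name: str
    data_domain: str
    owner: Optional[str]       # person or team accountable for this data domain
    max_latency_minutes: int   # 1440 means a nightly batch load is acceptable


def missing_owners(requirements: list[ReportRequirement]) -> list[str]:
    """Return the names of reports that have no data owner assigned."""
    return [r.name for r in requirements if not r.owner]


def needs_real_time(requirements: list[ReportRequirement], threshold_minutes: int = 15) -> bool:
    """True if any report needs data fresher than the assumed threshold."""
    return any(r.max_latency_minutes <= threshold_minutes for r in requirements)


requirements = [
    ReportRequirement("Campaign ROI", "marketing", "growth-team", 1440),
    ReportRequirement("Monthly opex", "finance", None, 1440),
    ReportRequirement("Live conversion rate", "product", "analytics-team", 5),
]

print(missing_owners(requirements))   # reports that should not ship yet
print(needs_real_time(requirements))  # whether a nightly batch alone is enough
```

If `missing_owners` returns anything, that is the signal to pause before building the pipeline for those reports.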

Step 2: Choose Your Architecture and Cloud Platform

The 2026 standard is the ELT model: Extract, Load, Transform. Move raw data into the warehouse first, then transform it using SQL-based tools like dbt. This keeps your data transformation logic version-controlled, testable, and visible to everyone.
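
To see the load-first, transform-second order in miniature, here is a rough sketch that uses Python's built-in `sqlite3` module as a stand-in for a cloud warehouse. The table names (`raw_orders`, `orders_clean`) and the sample rows are made up for illustration; in a real stack the transform step would typically live in a dbt model rather than an inline string.

```python
import sqlite3

# In-memory SQLite stands in for the cloud warehouse (an assumption for this sketch).
conn = sqlite3.connect(":memory:")

# Extract + Load: land the source rows untouched, messy values included.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, tenant_id TEXT, amount TEXT, status TEXT)")
raw_rows = [
    ("1001", "acme", "19.99", "PAID"),
    ("1002", "acme", "5.00", "refunded"),
    ("1003", "globex", " 42.50", "paid"),
]
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", raw_rows)

# Transform: runs inside the warehouse as SQL, so the logic can be version-controlled.
conn.execute("""
    CREATE TABLE orders_clean AS
    SELECT
        CAST(order_id AS INTEGER)  AS order_id,
        tenant_id,
        CAST(TRIM(amount) AS REAL) AS amount,
        LOWER(status)              AS status
    FROM raw_orders
""")

paid_total = conn.execute(
    "SELECT ROUND(SUM(amount), 2) FROM orders_clean WHERE status = 'paid'"
).fetchone()[0]
print(paid_total)
```

Because the raw table is kept as loaded, a mistake in the transform can be fixed and rerun without going back to the source system.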

| Platform | Best For | Watch Out For |
|---|---|---|
| Amazon Redshift | AWS-native teams, high-concurrency | Cluster sizing decisions get expensive fast |
| Google BigQuery | Serverless, pay-per-query flexibility | Unoptimized queries spike costs |
| Azure Synapse | Microsoft-stack organizations | More setup complexity vs. simpler alternatives |
| Snowflake | Broad ecosystem, data sharing | Compute costs climb steeply at SaaS concurrency levels |
| Microsoft Fabric | Teams already in the Microsoft ecosystem | Still maturing |

For SaaS companies, platform choice carries an extra consideration: if your warehouse powers customer-facing analytics, query volume from hundreds of concurrent tenant sessions looks nothing like internal reporting traffic.

Platforms priced on query compute can become very expensive, very fast.

🚩Red flag: Choosing a warehouse platform because your data team already knows it, without checking whether it handles the multi-tenant query patterns of your SaaS app, is a costly architecture mistake.

Step 3: Design Your Data Model

The Kimball dimensional model tends to be the top choice for SaaS. Organize your data into a central Fact Table (the “verb,” like a sale or login) surrounded by Dimension Tables (the “nouns,” like the customer or product).
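
A minimal star schema might look like the sketch below, again using `sqlite3` as a stand-in warehouse. The table and column names (`fact_sale`, `dim_customer`, `dim_product`, `plan`) and the sample data are assumptions chosen for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Dimension tables: the "nouns"
    CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, customer_name TEXT, plan TEXT);
    CREATE TABLE dim_product  (product_key  INTEGER PRIMARY KEY, product_name  TEXT);

    -- Fact table: the "verb" (one row per sale), pointing at the dimensions
    CREATE TABLE fact_sale (
        sale_id      INTEGER PRIMARY KEY,
        customer_key INTEGER REFERENCES dim_customer(customer_key),
        product_key  INTEGER REFERENCES dim_product(product_key),
        sale_date    TEXT,
        amount       REAL
    );

    INSERT INTO dim_customer VALUES (1, 'Acme', 'enterprise'), (2, 'Globex', 'starter');
    INSERT INTO dim_product  VALUES (10, 'Seats'), (11, 'Add-on');
    INSERT INTO fact_sale VALUES
        (1, 1, 10, '2026-01-05', 500.0),
        (2, 1, 11, '2026-01-09', 120.0),
        (3, 2, 10, '2026-01-11', 80.0);
""")

# A typical analytical question: join the fact to one dimension and aggregate.
revenue_by_plan = conn.execute("""
    SELECT c.plan, SUM(f.amount) AS revenue
    FROM fact_sale f
    JOIN dim_customer c ON c.customer_key = f.customer_key
    GROUP BY c.plan
    ORDER BY c.plan
""").fetchall()
print(revenue_by_plan)
```

Every business question becomes the same shape of query: pick a fact, join the dimensions you want to slice by, and aggregate.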

To manage the data flow, map your logic to the Medallion Architecture:

- Bronze: Raw, untouched data extraction.

- Silver: Cleaned, de-duplicated, and joined.

- Gold: The final Star Schema, aggregated and ready for user consumption.
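
The three layers can be sketched in plain Python as a pair of small functions. This is a simplified illustration, not a production pattern: the event fields, the `to_silver` and `to_gold` helpers, and the sample rows are hypothetical, and the Silver step here only cleans and de-duplicates (joins are left out to keep it short).

```python
from collections import defaultdict

# Bronze: raw events exactly as extracted, duplicates and messy values included.
bronze = [
    {"event_id": "e1", "tenant": "Acme ", "type": "login", "day": "2026-01-05"},
    {"event_id": "e1", "tenant": "Acme ", "type": "login", "day": "2026-01-05"},  # duplicate delivery
    {"event_id": "e2", "tenant": "acme", "type": "login", "day": "2026-01-05"},
    {"event_id": "e3", "tenant": "globex", "type": "login", "day": "2026-01-06"},
]


def to_silver(rows):
    """Clean and de-duplicate: normalize tenant names, keep one row per event_id."""
    seen, silver = set(), []
    for row in rows:
        if row["event_id"] in seen:
            continue
        seen.add(row["event_id"])
        silver.append({**row, "tenant": row["tenant"].strip().lower()})
    return silver


def to_gold(rows):
    """Aggregate to the grain users query: logins per tenant per day."""
    counts = defaultdict(int)
    for row in rows:
        counts[(row["tenant"], row["day"])] += 1
    return dict(counts)


silver = to_silver(bronze)
gold = to_gold(silver)
print(gold)
```

Bronze is never modified, so Silver and Gold can always be rebuilt from it when the cleaning rules change.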

Step 4: Build Your Data Pipeline and ETL Processes

Data extraction is the silent killer of most data pipelines. Because you are pulling from legacy tools like MS Access or rate-limited APIs, your data extraction layer is naturally fragile.

The fix is a modern stack: Fivetran or Airbyte for ingestion, dbt for data transformation, and Airflow for orchestration.

To avoid the data corruption that 26% of businesses struggle with, make every pipeline idempotent. Using MERGE statements instead of simple appends ensures that running a job twice doesn’t double your records, protecting your data quality from the start.
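
Here is a small sketch of an idempotent load. SQLite has no `MERGE` statement, so this example swaps in its upsert syntax (`INSERT ... ON CONFLICT DO UPDATE`, available in SQLite 3.24 and later) to get the same effect. The `customers` table, the `load_batch` helper, and the sample rows are assumptions for illustration; on a cloud warehouse you would write the equivalent `MERGE`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, email TEXT, plan TEXT)")

batch = [(1, "ana@example.com", "starter"), (2, "raj@example.com", "enterprise")]


def load_batch(rows):
    """Insert new rows and update existing ones, keyed on customer_id."""
    conn.executemany(
        """
        INSERT INTO customers (customer_id, email, plan)
        VALUES (?, ?, ?)
        ON CONFLICT(customer_id) DO UPDATE SET
            email = excluded.email,
            plan  = excluded.plan
        """,
        rows,
    )
    conn.commit()


load_batch(batch)
load_batch(batch)  # simulated retry of the same job

row_count = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
print(row_count)  # still 2 rows after the retry
```

The key design choice is a stable business key (`customer_id` here): without one, the load has nothing to match on and a retry can only append.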

Step 5: Load Data and Validate Data Quality

Loading is a high-stakes transition where quality either holds or breaks. Don’t wait for a customer to spot a fact_sale table that dropped from 50k to 12k rows overnight:

- Automate your reconciliation checks against source totals immediately

- Use data observability tools like Monte Carlo or Elementary to monitor freshness and schema drift
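
Before reaching for a dedicated tool, the two checks above can be approximated in a few lines. The function names, the 20% drop threshold, and the sample numbers below are hypothetical; tune them to your own data.

```python
def reconcile(source_total: float, warehouse_total: float, tolerance: float = 0.0) -> bool:
    """True when the warehouse total matches the source total within tolerance."""
    return abs(source_total - warehouse_total) <= tolerance


def row_count_dropped(previous_rows: int, current_rows: int, max_drop_pct: float = 20.0) -> bool:
    """True when the row count fell by more than max_drop_pct since the last load."""
    if previous_rows == 0:
        return False
    drop_pct = (previous_rows - current_rows) / previous_rows * 100
    return drop_pct > max_drop_pct


# Totals agree, so the load reconciles.
print(reconcile(10_250.00, 10_250.00))

# The fact_sale scenario: 50k rows yesterday, 12k today.
print(row_count_dropped(50_000, 12_000))

# A small day-to-day dip stays under the threshold.
print(row_count_dropped(50_000, 49_500))
```

Run checks like these right after each load and fail the job when one trips, so a bad load never reaches a customer dashboard.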

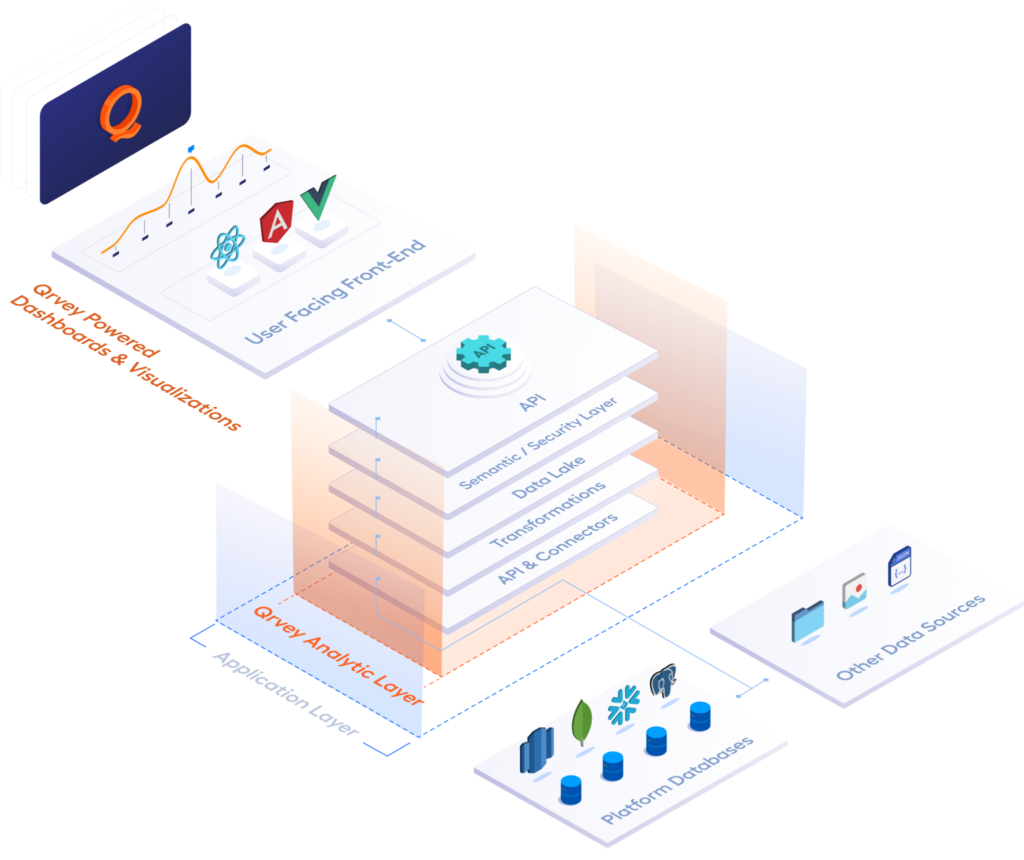

Step 6: Enable Data Consumption

Your warehouse is built, data is flowing. Now what?

You must choose your delivery model. Internal operations might thrive on Power BI report connections and DAX Measures, but a SaaS application with thousands of users requires a distributed architecture that can handle concurrent requests without crashing.

The Qrvey Advantage: Qrvey eliminates the “noisy neighbor” problem common in multi-tenant environments.

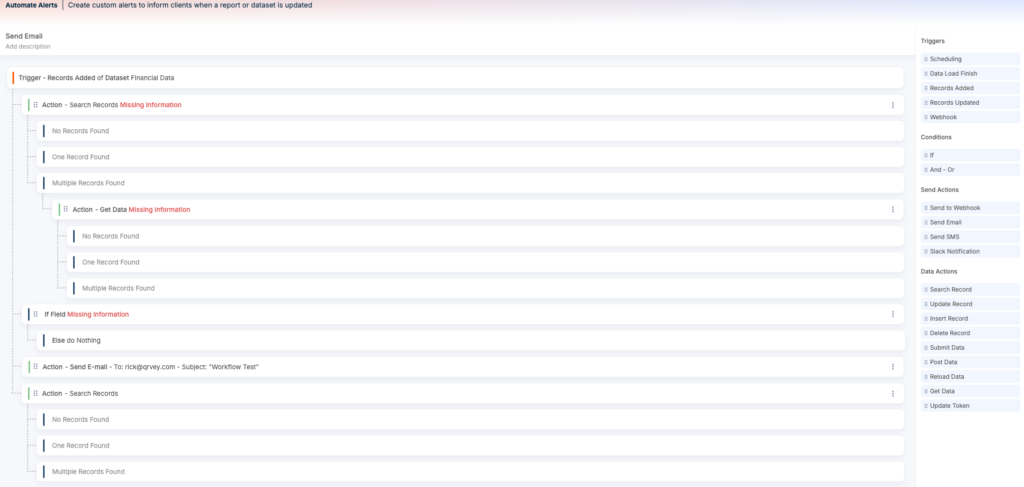

By deploying as a containerized solution within your own cloud, Qrvey scales automatically alongside your app, offering no-code workflow automation and embedded AI insights that turn your warehouse data into a competitive differentiator.

How Long Does It Take to Build a Data Warehouse?

Simple warehouses with two or three sources and internal reporting take four to eight weeks, while mid-scale setups with complex ETL processes can run three to six months.

For customer-facing multi-tenant analytics built entirely in-house (a product and data engineering project), expect 12 to 24 months. And that assumes you have the right data engineering and security expertise on staff.

| Scenario | Realistic Timeline |
|---|---|
| Simple warehouse, 2-3 sources, internal use | 4–8 weeks |
| Mid-complexity, 5-10 sources, dashboards | 3–6 months |
| Complex transformations, governance, compliance | 6–12 months |
| Customer-facing multi-tenant analytics in-house | 12–24 months |

So, to build or buy? Use Qrvey’s free ROI Calculator to quantify the actual cost difference.

Common Challenges When Building a Data Warehouse

Building a warehouse that holds up under hundreds of concurrent tenants, unpredictable query loads, and customer-facing security requirements is where most SaaS teams find out what they missed. Here are the common walls teams hit.

Multi-Tenant Data Security Doesn’t Come Free

Data warehouses aren’t multi-tenant by default. To connect one to your SaaS app, you’re forced to build a custom orchestration layer (middleware, metatables, and row-level security) that you likely didn’t budget for.

AWS and Snowflake both point to the Pool/Multi-Tenant Table model as the gold standard for scale, but the ongoing cost is the engineering hours spent maintaining that “middle” security layer indefinitely.
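
To give a feel for what that middle layer does in the pool model, here is a deliberately small sketch of an application-level tenant filter, with `sqlite3` standing in for the warehouse. The `invoices` table, the `invoices_for` helper, and the tenant IDs are hypothetical, and a real deployment would likely pair this with the warehouse's own row-level security features rather than rely on application code alone.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Pool model: every tenant shares one table, separated by a tenant_id column.
    CREATE TABLE invoices (invoice_id INTEGER PRIMARY KEY, tenant_id TEXT NOT NULL, amount REAL);
    INSERT INTO invoices VALUES
        (1, 'tenant_a', 100.0),
        (2, 'tenant_a', 250.0),
        (3, 'tenant_b', 999.0);
""")


def invoices_for(tenant_id: str):
    """Return only the calling tenant's rows.

    tenant_id should come from the authenticated session, never from
    user-supplied query text. It is bound as a parameter to avoid injection.
    """
    if not tenant_id:
        raise ValueError("tenant_id is required for every query")
    return conn.execute(
        "SELECT invoice_id, amount FROM invoices WHERE tenant_id = ? ORDER BY invoice_id",
        (tenant_id,),
    ).fetchall()


print(invoices_for("tenant_a"))
print(invoices_for("tenant_b"))
```

The maintenance burden comes from making sure every query path goes through a function like this, for every table, indefinitely.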

Qrvey eliminates this technical debt by deploying a pre-built, multi-tenant-aware data layer directly into your cloud, handling the plumbing of tenant isolation automatically.


Warehouse Costs Spike Unexpectedly for SaaS Workloads

SaaS margins die in the gap between Snowflake’s fixed-tier scaling and your customers’ unpredictable query patterns.

Because Snowflake tiers double in cost with every step up, you’re forced to over-provision for peak traffic, paying for high-tier compute during the 20 hours a day your users are asleep.
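
The arithmetic is easy to sketch. The example below assumes the standard credit schedule, where an X-Small warehouse uses 1 credit per hour and each size up doubles that rate. The price per credit is a hypothetical placeholder, since the real figure depends on your edition, region, and contract.

```python
# Credits per hour for each warehouse size; each step up doubles the rate.
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16}

PRICE_PER_CREDIT = 3.00  # hypothetical USD price, not a quoted rate


def daily_cost(size: str, hours_running: float) -> float:
    """Compute cost for one warehouse running the given number of hours per day."""
    return CREDITS_PER_HOUR[size] * hours_running * PRICE_PER_CREDIT


always_on = daily_cost("L", 24)   # provisioned for peak, running around the clock
active_only = daily_cost("L", 4)  # only the 4 hours users are actually querying

print(always_on, active_only)
print(always_on - active_only)    # daily spend on idle compute under these assumptions
```

Under these assumed numbers, five-sixths of the daily bill pays for hours when no customer is running a query.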

→ Use our free Snowflake Savings Calculator

Schema Mistakes Are Expensive to Undo

Schema decisions are structural, not cosmetic. The dimensional model you build today dictates your data team’s velocity in year three. Star schemas built in a vacuum, without considering the SaaS product roadmap, are almost always rebuilt within 18 months.

Common mistake: Designing for your current database instead of the business questions. A data warehouse is a product; design it for your customers, not your source code.

Analytics Performance Degrades as Tenant Count Grows

In a customer-facing product, slow analytics is a revenue risk. Standard warehouses lack the native partitioning needed for 500+ tenants, leading to degraded experiences as you grow.

Lakehouse table formats like Apache Hudi provide raw speed but add massive technical debt. Understanding the structural differences in a Data Lake vs Data Warehouse for Embedded Analytics can help you decide which path offers the best balance of performance and security as your tenant count grows.

Why SaaS Platforms Need More Than a Data Warehouse

Relying solely on a data warehouse for customer insights often leads to two things: ballooning operational expenses and high churn.

When customers can’t find answers inside your product, they export data, breaking the “stickiness” of your platform. On the flip side, a dedicated embedding layer like Qrvey has the SaaS-native logic to:

- Provide embedded AI insights directly to non-technical users


- Trigger No-code workflow automation based on tenant data

- Scale to unlimited users without per-seat licensing fees

Qrvey exists as the only platform that turns your data warehousing investment into a profit center by delivering a seamless, multi-tenant experience.


Building Multi-Tenant Analytics From Your Data Warehouse

The challenge for SaaS teams is getting the right data out to the right customer, securely, at the application layer.

With Qrvey, your SaaS queries Snowflake, Redshift, or BigQuery in real time, inheriting your existing security tokens at session start. You eliminate redundant data syncing and duplicate user management while ensuring Tenant A never sees Tenant B’s rows.

Take JobNimbus, a CRM for contractors. They were losing enterprise customers over inflexible reporting. After embedding Qrvey’s self-service dashboard builder, they hit 70% adoption among enterprise users within months, without rebuilding their data infrastructure.

Follow the lead of enterprise-ready brands delivering analytics to customers from their existing warehouse. Book a demo or watch one right away.

FAQs

A data warehouse is a centralized system used for data analysis and reporting. It pulls data from various sources into a single, high-performance environment.

You can implement a sandboxed “tenant lake” pattern. Qrvey enables tenants to connect their own SQL-compliant sources directly to the platform, governed by your central security policies and storage quotas to prevent one tenant from over-consuming resources.

In a modern DevOps environment, you should use scripted Docker images. A full Qrvey deployment into your own Azure or AWS cloud account typically takes about 45 minutes, creating a fully functional, multi-tenant-ready analytics environment automatically.

To limit egress costs, use a hybrid approach. Qrvey supports “Live Connect” to query data in-place for real-time needs, while batching historical data into the warehouse every three to six hours to maintain freshness without ballooning your cloud bill.

David is the Chief Technology Officer at Qrvey, the leading provider of embedded analytics software for B2B SaaS companies. With extensive experience in software development and a passion for innovation, David plays a pivotal role in helping companies successfully transition from traditional reporting features to highly customizable analytics experiences that delight SaaS end-users.

Drawing from his deep technical expertise and industry insights, David leads Qrvey’s engineering team in developing cutting-edge analytics solutions that empower product teams to seamlessly integrate robust data visualizations and interactive dashboards into their applications. His commitment to staying ahead of the curve ensures that Qrvey’s platform continuously evolves to meet the ever-changing needs of the SaaS industry.

David shares his wealth of knowledge and best practices on topics related to embedded analytics, data visualization, and the technical considerations involved in building data-driven SaaS products.
