⚡Key Takeaways

- The primary difference between a data lake and data warehouse is when structure is applied: data lakes use schema-on-read for flexibility, while data warehouses enforce schema-on-write for structured reporting

- For embedded analytics in multi-tenant SaaS, flexibility matters more than rigidity; data lakes handle evolving schemas, high-volume events, and diverse data types without constant pipeline rebuilds

- Data warehouses struggle with multi-tenant scale: row-level security complexity, concurrency demands, and query costs all climb steeply as tenant usage increases

- Qrvey’s optimized multi-tenant data lake is purpose-built for embedded analytics, handling tenant isolation, performance, and cost control without requiring custom infrastructure

Picking the wrong data storage architecture shows up quietly in query costs that keep rising, pipelines that keep breaking, and dashboards that load just slow enough to make your customers stop trusting them.

The data lake vs data warehouse topic is really a question about what your data needs to do. Raw storage and fast analytical querying are different jobs, and they need different tools.

This guide walks through what each one actually is, where they overlap, and how high-growth SaaS teams decide which architecture (or which combination) fits their product’s real-world demands.

What is a Data Lake?

A data lake is a central repository that stores all kinds of data in its original, raw form.

Unlike traditional data warehouses, data lakes can ingest, store, and process structured, semi-structured, and unstructured data.

AWS describes the distinction plainly: a data warehouse holds preprocessed, structured data ready for querying, while a data lake stores raw data as-is. Structure gets applied later, when you actually need it.

What Is a Data Warehouse?

A data warehouse is a highly structured data management system designed specifically for data analytics and business reporting. Think of it as a meticulously organized library where every book (data point) has a specific place on a shelf before it is even allowed inside.

In a B2B SaaS context, your data warehouse acts as the single source of truth.

It takes data from your relational databases, cleans it, and transforms it into structured data that business analysts can query instantly. Because it enforces ACID transactions, a warehouse helps ensure that the business insights you show your customers are accurate and consistent.

Why Data Lakes Beat Data Warehouses for Embedded Analytics

A data lake and data warehouse aren’t just two ways to store data but two fundamentally different philosophies about when and how data gets structured.

| Factor | Data Lake | Data Warehouse |
|---|---|---|
| Schema approach | Schema on read | Schema on write |
| Data types | Structured, semi-structured, unstructured | Structured only |
| Primary users | Data scientists, data engineers, ML teams | Business analysts, product teams |
| Storage cost | Low (object storage like AWS S3) | Higher (proprietary compute + storage) |
| Query performance | Slower without optimization | Fast, optimized for SQL |
| Multi-tenant support | Flexible; tenant isolation achievable with proper access controls | Requires significant custom engineering |
| Governance | Requires deliberate effort | Built-in by design |
| Best for | ML training, raw data exploration, multi-tenant SaaS analytics | Reporting, dashboards, structured BI |

1. Schema Approach: Flexibility vs. Discipline

This is the core divide. A data warehouse enforces a schema before data enters; every field has a type, every table has a structure. A data lake applies structure at query time (schema on read), meaning raw data goes in as-is and gets interpreted when someone actually needs it.
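To make the contrast concrete, here is a minimal sketch in plain Python. The `ORDERS_SCHEMA` dictionary, the field names, and the in-memory lists standing in for a warehouse table and lake files are all illustrative assumptions, not how any particular warehouse or lake engine works internally.

```python
import json

# Hypothetical warehouse-style schema: every field must be present with the right type.
ORDERS_SCHEMA = {"order_id": int, "tenant_id": str, "amount": float}


def write_with_schema(table, record):
    """Schema-on-write: reject a record that does not match the schema exactly."""
    if set(record) != set(ORDERS_SCHEMA):
        raise ValueError(f"unexpected fields: {sorted(set(record) ^ set(ORDERS_SCHEMA))}")
    for field, expected_type in ORDERS_SCHEMA.items():
        if not isinstance(record[field], expected_type):
            raise TypeError(f"{field} must be {expected_type.__name__}")
    table.append(record)


def read_with_schema(raw_lines, fields):
    """Schema-on-read: raw JSON lines are stored as-is; fields are projected at query time."""
    for line in raw_lines:
        event = json.loads(line)
        yield {name: event.get(name) for name in fields}


warehouse_table = []  # stands in for a warehouse table
lake_files = []       # stands in for raw objects in a lake

# Engineering ships a new "coupon" field that the schema does not know about.
new_event = {"order_id": 1, "tenant_id": "acme", "amount": 19.5, "coupon": "SPRING"}

# The lake accepts the record unchanged.
lake_files.append(json.dumps(new_event))

# The warehouse rejects it until the schema is migrated.
try:
    write_with_schema(warehouse_table, new_event)
    warehouse_accepted = True
except ValueError:
    warehouse_accepted = False

# Structure is chosen only when someone queries the lake.
rows = list(read_with_schema(lake_files, ["order_id", "coupon"]))
```

In this sketch the new field costs the lake nothing at write time, while the warehouse path needs a schema change before the same record can land.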

As Qrvey’s CTO David Abramson puts it: “Data lakes are built around a very flexible, less strict, less enforced sort of schema. It’s great for SaaS product and data environments that are tied to development resources.”

For agile SaaS teams adding new product features every sprint, that flexibility is often the only way to keep up.

When your warehouse schema has to be rebuilt every time engineering ships a new field, data engineers become the bottleneck between product development and customer-facing analytics.

2. Data Types Each Can Handle

Data warehouses work with structured data: clean, organized, relational.

Data lakes accept everything: structured data, semi-structured data (like JSON from your API), logs, clickstreams, IoT data, images, audio.

Most modern SaaS platforms generate data in formats beyond standard relational tables: webhook payloads, API responses, event streams, user interaction logs.

If your warehouse can’t ingest it without a transformation pipeline, that’s engineering overhead for every new data source your product adds.
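As a rough illustration of that overhead, the sketch below shows the kind of flattening step a nested webhook payload usually needs before it fits a relational table. The payload shape and the `flatten` helper are hypothetical; real pipelines also have to handle type coercion, arrays, and schema changes.

```python
import json


def flatten(payload, prefix=""):
    """Flatten nested dicts into dotted column names; lists are kept as JSON text."""
    flat = {}
    for key, value in payload.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{name}."))
        elif isinstance(value, list):
            flat[name] = json.dumps(value)
        else:
            flat[name] = value
    return flat


# Hypothetical webhook payload; field names are illustrative only.
webhook = {
    "event": "invoice.paid",
    "tenant": {"id": "acme", "plan": "pro"},
    "items": [{"sku": "A1", "qty": 2}],
}

row = flatten(webhook)
```

A lake can store the original `webhook` document untouched and defer this step until a query actually needs the columns.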

3. Who Actually Queries Them

Data warehouses are built for business analysts and product teams who need clean, fast answers from structured data. Data lakes are the domain of data scientists and engineers running exploratory queries, training models, or processing raw event streams.

For embedded analytics specifically, this distinction flips the calculus.

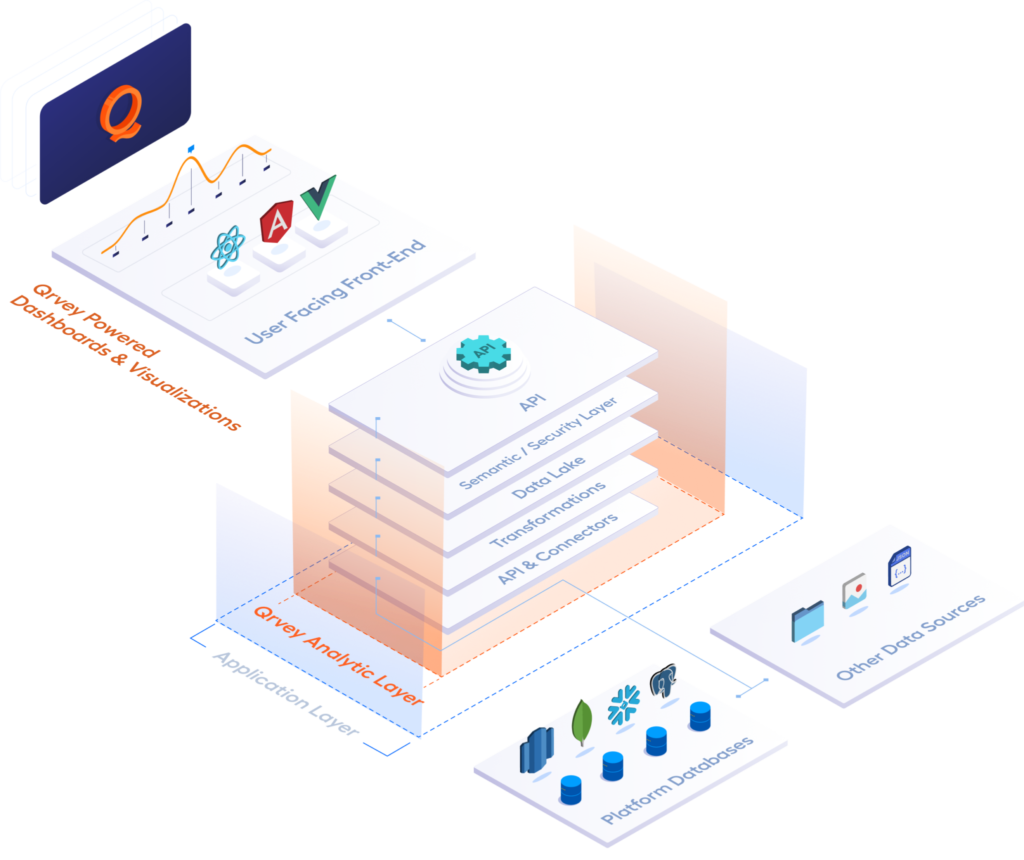

Your end users (the tenants inside your SaaS product) aren’t running raw SQL against a lake. They’re using dashboards, builders, and natural language interfaces. The lake sits underneath the analytics layer, handling ingestion and storage.

An embedded analytics platform like Qrvey handles what users actually see.

4. Storage and Compute Cost

Data lakes leverage cloud object storage like Amazon S3 or Azure Data Lake Storage, which are intentionally low-cost. If IDC’s prediction of a 175 Zettabyte DataSphere holds true, storing everything in a data warehouse would be a massive operational expense.

Teams that have tried powering multi-tenant embedded analytics directly off Snowflake know this pain: costs that looked manageable at 50 customers become unpredictable at 500. The warehouse is for your “high-value” clean data while the lake is for massive volumes of raw data.

5. Governance and Data Quality

Without a schema enforced at write time, a lake can quickly become a “data swamp,” full of duplicated, inconsistent, or undocumented data that nobody trusts. Adhering to solid data lake best practices alongside tools like AWS Lake Formation or Unity Catalog can help, but both require real investment.

For multi-tenant SaaS, you must enforce row-level security manually. That means:

- Tenant ID filters

- Column-level permissions

- Token-based access flows

- Secure query routing

Without this, one tenant could see another’s data. That’s why governance complexity is higher in lakes.
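The first two items in that list can be sketched in a few lines of Python. This is a simplified illustration, assuming a hypothetical `Session` object produced by validating an access token and an in-memory list of rows; a production system would enforce the same rules in the query engine or storage layer rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    """Hypothetical result of validating an access token."""
    tenant_id: str
    allowed_columns: frozenset


def run_query(rows, session, columns):
    """Return only the caller's tenant rows, limited to permitted columns."""
    denied = set(columns) - session.allowed_columns
    if denied:
        raise PermissionError(f"columns not permitted: {sorted(denied)}")
    return [
        {col: row[col] for col in columns}
        for row in rows
        if row["tenant_id"] == session.tenant_id  # tenant ID filter on every query
    ]


events = [
    {"tenant_id": "acme", "user": "ann", "revenue": 120},
    {"tenant_id": "globex", "user": "bob", "revenue": 300},
    {"tenant_id": "acme", "user": "cy", "revenue": 80},
]

acme = Session(tenant_id="acme", allowed_columns=frozenset({"user", "revenue"}))
result = run_query(events, acme, ["user", "revenue"])
```

The important design choice is that the tenant filter comes from the validated session, never from anything the caller passes in the query itself.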

Data warehouses have governance built in. The transformation required to get data into the warehouse is also what ensures it’s clean, consistent, and trustworthy when it comes out.

That’s a real advantage but it comes at the cost of flexibility and multi-tenant engineering overhead that warehouses weren’t designed to absorb.

Data Lake vs Data Warehouse Use Cases

The question isn’t really which one is better. Choosing the right home for your data depends on what you plan to do with it.

When a Data Lake Makes More Sense

Use a data lake when your SaaS application needs to:

- Ingest high-velocity or unpredictably formatted data: IoT telemetry, API payloads, clickstream events

- Train machine learning models which require massive volumes of raw, unformatted data

- Explore data without a predefined goal: data discovery before you know what questions you’ll ask

- Store large volumes for compliance or future use without paying warehouse compute prices for data you’re not actively querying

- Serve analytics to a large, growing tenant base where cost predictability and isolation at scale are non-negotiable

Example scenario: A B2B SaaS company building fleet management software receives telemetry data from thousands of connected vehicles in real time. That data arrives in raw JSON, at high volume, in unpredictable schemas.

A data lake on Amazon S3 is the obvious landing zone as it handles the volume cheaply, stores open formats without transformation, and feeds downstream ML models predicting maintenance needs.
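One common way to organize such a landing zone is to partition object keys by tenant and date so later queries can skip irrelevant files. The sketch below assumes a hypothetical key layout and uses a plain dictionary in place of object storage; it does not call any AWS API.

```python
import json
from datetime import datetime, timezone


def object_key(tenant_id, vehicle_id, received_at):
    """Build a partitioned key so queries can prune by tenant and date."""
    return (
        f"raw/telemetry/tenant={tenant_id}/"
        f"date={received_at:%Y-%m-%d}/"
        f"{vehicle_id}-{received_at:%H%M%S}.json"
    )


def land(store, tenant_id, vehicle_id, payload, received_at):
    """Write the payload unchanged; `store` is a dict standing in for object storage."""
    key = object_key(tenant_id, vehicle_id, received_at)
    store[key] = json.dumps(payload)
    return key


store = {}
ts = datetime(2026, 1, 15, 8, 30, 0, tzinfo=timezone.utc)
key = land(store, "acme", "truck-42", {"speed_kph": 88, "engine": {"temp_c": 91}}, ts)
```

The payload is stored exactly as received, nested fields included, and the key alone tells a downstream job which tenant and day the object belongs to.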

When a Data Warehouse Makes More Sense

Use a data warehouse when:

- You need executive reporting: for example, your product management team needs a monthly churn report that must be accurate

- You need financial tracking: any data that requires ACID transactions and high data quality standards for business decisions

Example scenario: A SaaS company offering subscription billing software needs to produce accurate monthly revenue reports for its finance team and customers. The data is relational and well-defined: subscription records, invoice amounts, payment statuses.

A warehouse like Google BigQuery or Amazon Redshift handles this well. Fast queries, trusted numbers, no guesswork about schema changes.

Do You Need a Data Lake or a Data Warehouse?

In 2026, the answer is clear: Your product needs a data lake, but your business might still need a warehouse.

For B2B SaaS companies embedding analytics into a product that serves multiple tenants, here are the questions that clarify it:

- How much of your data is structured vs. unstructured?

- Do your end users need self-service access to the data, or is it only engineers running queries?

- Are you running operational analytics in real time, or scheduled batch reporting?

- How many tenants are you serving today, and where will that number be in 18 months?

- Do you have the engineering capacity to build and maintain custom multi-tenant security on top of a warehouse?

If you are only using a warehouse, your data engineers are likely wasting time cleaning data that will never be used.

If you’re running a lake without proper governance and a purpose-built analytics layer on top, your business users are probably treating it like an archaeology project every time they need a simple metric.

The architecture that works for embedded analytics at scale is a data lake that’s been purpose-built for multi-tenant query patterns, with a managed analytics layer sitting on top that business users can use.

Pros and Cons of a Data Lake

Pros

- Massive scalability at low cost: Stores petabytes on cloud object storage like AWS S3 without the compute overhead of a warehouse

- Handles any data type: Structured data, semi-structured data, unstructured data, logs, media, IoT data, all welcome

- Schema flexibility: Add columns on the fly without breaking existing pipelines, critical for fast-moving SaaS development teams

- Open formats: Formats like Parquet and Avro are portable; you’re not locked into a proprietary engine

Cons

- Governance complexity: Without a data catalog, data observability tooling, and strict metadata management, the lake becomes a swamp fast

- Slow query performance without optimization: Running SQL queries directly against raw lake files is slow without layers like Apache Spark or a lakehouse optimization engine

- Not business-user friendly: A typical business analyst cannot self-serve from a raw lake; you need transformation and a semantic layer first

- Multi-tenant security is custom work: Tenant data isolation in a co-mingled lake doesn’t come built in; it requires deliberate engineering at the storage and query routing layer


Pros and Cons of a Data Warehouse

Pros

- Blazing Speed: Optimized for business reporting and SQL queries, providing sub-second responses for end-users

- Reliability: Enforces data strategy through strict schemas, ensuring that “Total Revenue” means the same thing to every user

- Security: Easier to manage data observability and user permissions when the data is already in a clean, relational data format

- ACID compliance: Transactional integrity is built in, which matters for financial and operational data

Cons

- Cost at scale for SaaS analytics: When thousands of tenants run concurrent queries, compute costs spike hard and fast; Snowflake’s pricing in particular can surprise teams who didn’t architect for query volume

- Rigidity: When your developers add a new feature to the app, your data engineers often have to rebuild the ETL pipelines that feed the warehouse

- Not built for multi-tenancy: Most warehouses struggle with the multi-tenant analytics logic required to isolate thousands of customers without massive engineering overhead

When is a Data Lake Better for Embedded Analytics in a Multi-tenant SaaS Application?

There are a few ways in which a data lake is the best choice for embedded analytics in a multi-tenant SaaS app.

1. Multi-tenant data lakes simplify scaling applications

Consolidating storage, compute, and administration overhead into shared infrastructure significantly reduces costs for both providers and tenant subscribers as user bases grow.

However, resource clusters are important to size correctly. Concurrency demands are real within a SaaS tenant base.

Data lakes are also advantageous for tenant data isolation. With tenants accessing the same instance, strict access controls prevent visibility into other tenants’ data.

2. Handling diverse data formats

Data types are increasing. Product leaders of SaaS platforms want to offer better analytics, but their data warehouse is often holding them back.

Data lakes open up analytics options. When semi-structured data is in play, data from document databases like MongoDB is easier to store in a data lake. With unstructured data support, you can even offer text analytics for customer service use cases.

3. Scalability for multiple tenants

Data warehouses don’t easily scale out for multi-tenancy without significant development effort.

To achieve multi-tenancy with a data warehouse, you must build additional infrastructure: the logic that sits between the database and the user-facing application, which engineering teams have to build themselves.

4. Data isolation and security

Data warehouses struggle with row-level security in multi-tenant environments. Every data warehouse solution requires additional efforts to secure tenant-level separation of data. This challenge compounds with user-level access control.

5. Cost advantages

Data lakes scale out more easily and require less computation. Qrvey’s native multi-tenant data lake is built on Elasticsearch and optimized for the query patterns of embedded analytics at scale.

OpenSearch is a viable open-source alternative for teams managing their own stack.

Data streaming pioneer Confluent writes, “Data lakes are the most efficient in costs as it is stored in their raw form whereas data warehouses take up much more storage when processing and preparing the data to be stored for analysis.”

Challenges of Implementing a Data Lake

1. Skilled resources

Software engineers are not data engineers.

If you’re building yourself, you’ll need a data engineer to properly scale a data lake for multi-tenant analytics. Scaling software is different from scaling analytics queries.

Data engineering involves creating systems to gather, store, and analyze data, especially on a big scale. A data engineer helps organizations collect and manage data to gain useful insights.

They also convert data into formats for analytics and machine learning. Qrvey removes the need for data engineers, which cuts both implementation costs and time to market.

2. Integration with existing systems

To analyze data from multiple sources, SaaS providers typically end up building a separate data pipeline and ETL process for each source.

Qrvey eliminates this work for data collection as well, so SaaS companies using Qrvey don’t need the assistance of data engineers to build and launch analytics.

Qrvey addresses this challenge with a turnkey data lake management layer with a unified data pipeline that offers:

- A single API to ingest any data type

- Pre-built data connectors to common databases and data warehouses

- A transformation rules engine

- A data lake optimized for scale and security requirements that include multi-tenancy when required

Best Practices for Using a Data Lake for Multi-Tenant Analytics

Defining a clear data strategy

Any organization that seeks to generate analytics must have a data strategy, which AWS defines as “a long-term plan that defines the technology, processes, people, and rules required to manage an organization’s information assets.”

This is harder than it sounds. Most teams assume their data is cleaner than it is until they actually start querying it and find duplicates, nulls, and schema drift everywhere.

Data cleaning is the process of fixing data within a dataset.

The problems typically seen are incorrect, corrupted, incorrectly formatted, or incomplete data.

Duplicate data is a particular concern when combining multiple data sources, and mislabeled data makes the problem worse. Both issues become even harder to handle when data arrives in real time.
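A quick profiling pass can surface these issues before they reach a dashboard. The following is a minimal sketch using only the Python standard library; the record layout, the `profile` helper, and the choice of `id` as the key are assumptions made for illustration.

```python
from collections import Counter


def profile(records, key_field, expected_fields):
    """Count duplicate keys, null values, and fields that drift from the expected set."""
    key_counts = Counter(r.get(key_field) for r in records)
    duplicates = sorted(k for k, n in key_counts.items() if n > 1)
    # A missing field and an explicit None are both treated as null here.
    nulls = Counter(
        field for r in records for field in expected_fields if r.get(field) is None
    )
    drift = sorted({f for r in records for f in r} - set(expected_fields))
    return {"duplicates": duplicates, "nulls": dict(nulls), "drift": drift}


# Hypothetical records merged from two sources.
records = [
    {"id": 1, "email": "a@example.com", "plan": "pro"},
    {"id": 1, "email": "a@example.com", "plan": "pro"},       # duplicate key
    {"id": 2, "email": None, "plan": "free"},                  # null value
    {"id": 3, "email": "c@example.com", "plan_name": "pro"},  # renamed field
]

report = profile(records, "id", ["id", "email", "plan"])
```

Running a check like this on each new source gives a concrete picture of how clean the data really is, rather than relying on assumptions.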

Database scalability is another area where optimism is often unfounded.

DesignGurus.io writes, “Horizontally scaling SQL databases is a complex task riddled with technical hurdles.” Who wants that?

Implementing data security and governance

SaaS providers may grant users permissions that control access to certain features. Controlling access is necessary in order to charge additional fees for add-on modules.

When offering self-service analytics capability, your data strategy must include security controls.

For example, most SaaS applications use user tiers to offer different features. Tenant “admins” can see all data.

Conversely, lower tier users only get partial access. This difference means all charts and chart builders must respect these tiers. It’s also complex and challenging to maintain data security if your data leaves your cloud environment.

When analytics vendors require you to send your data to their cloud, it creates an unnecessary security risk.

By contrast, with a self-hosted solution like Qrvey, your data never leaves your cloud environment. Your analytics can run entirely inside your environment, inheriting your security policies already in place. This is optimal for SaaS applications. It makes your solution not only secure but easier and faster to install, develop, test, and deploy.

Make the Most of Your Data Architecture With Qrvey’s Embedded Analytics

Qrvey offers flexible data integration options to cater to various needs. It allows for both live connections to existing databases and ingesting data into its built-in data lake.

This cloud data lake approach optimizes performance and cost-efficiency for complex analytics queries. Additionally, the system automatically normalizes data during ingestion so it’s ready for multi-tenant analysis and reporting.

JobNimbus saw this play out directly. Facing churn from enterprise customers who found their legacy reporting too inflexible, they embedded Qrvey’s self-service dashboard builder into their CRM.

Within months, they achieved a 70% adoption rate among enterprise users and a measurable improvement in product-market fit score. That’s what happens when the analytics layer stops being an internal workaround and starts being a product feature customers actually use.

Book a demo with our team to experience Qrvey. Or if you’d rather evaluate first, use our free Embedded Analytics Evaluation Guide and ROI Calculator as a solid build-vs-buy comparison framework.

FAQs

A data warehouse is a centralized repository of structured, modeled data optimized for fast SQL queries and reporting. Common examples include Snowflake, Amazon Redshift, and Google BigQuery.

A data lake is a scalable repository that stores vast amounts of raw data in its native format (structured or unstructured) until needed for high-concurrency, multi-tenant SaaS analytics and processing.

Data lakes scale cost-effectively. Because they use cloud object storage, they allow you to store petabytes of raw data at a fraction of the cost of a relational database.

Data lakes are also extremely flexible. You can store everything from social media logs to IoT device data without needing to define a schema until the moment you’re ready to analyze it.

A data lake is generally much cheaper for raw storage, but you may incur “compute” costs when using query tools to process that data for a dashboard.

You can use both together. Many SaaS companies use a data lake for high-volume, customer-facing embedded analytics and a data warehouse for internal, structured business reporting to balance performance, flexibility, and cost-efficiency.

The trend in 2026 is moving toward the data lakehouse, which combines the cheap storage of a lake with the metadata layer and performance of a warehouse.

David is the Chief Technology Officer at Qrvey, the leading provider of embedded analytics software for B2B SaaS companies. With extensive experience in software development and a passion for innovation, David plays a pivotal role in helping companies successfully transition from traditional reporting features to highly customizable analytics experiences that delight SaaS end-users.

Drawing from his deep technical expertise and industry insights, David leads Qrvey’s engineering team in developing cutting-edge analytics solutions that empower product teams to seamlessly integrate robust data visualizations and interactive dashboards into their applications. His commitment to staying ahead of the curve ensures that Qrvey’s platform continuously evolves to meet the ever-changing needs of the SaaS industry.

David shares his wealth of knowledge and best practices on topics related to embedded analytics, data visualization, and the technical considerations involved in building data-driven SaaS products.
