⚡Key Takeaways

- Data lake management ensures your raw, semi-structured and unstructured data works for you instead of becoming a lifeless “data swamp.”

- A solid data lake architecture supports scalable storage (object storage, cloud) + compute (analytics engines, streaming) + many data sources like SaaS apps, IoT sensors and flat files.

- Best practices (governance, access control, data quality) and the right data lake tools help you turn massive Big Data volumes into business value.

“Where’s the customer revenue data from Q3?” Someone asks this in Slack. Three people respond with three different answers.

Your data lake has everything, but finding the right thing is a nightmare.

Poor data lake management costs you more than storage bills. It erodes trust, slows teams down, and delays every decision while people argue over which dataset is correct. Companies with structured approaches cut query times from hours to seconds just by adding the right organizational layer.

You’ll learn the five architectural elements that transform messy storage into instant answers, plus real examples of teams who made the switch.

Architecture of Data Lake Management

Think of data lake architecture as accepting everything at the door but organizing it into rooms once inside. You need three layers working together, along with a firm grasp of foundational data lake best practices to ensure scalable operations.

Storage and Compute Layers

Storage accepts raw data in any format: CSV files, JSON logs, Parquet tables, images from IoT sensors. Srikanth Sopirala, AWS Solutions Architect, says proper data governance starts here by “organizing, transforming and linking the data to improve quality.”

The compute layer processes this information when needed. Cloud object stores like Amazon S3 or Azure Data Lake Storage let you separate storage from processing, so you only pay for compute during active jobs rather than keeping expensive servers running constantly.

The governance layer makes everything discoverable. This means cataloging datasets and tracking data lineage so teams can actually find what they need.

Without governance, data leaders warn that “the value of that data is completely degraded” because nobody knows what exists or whether it’s trustworthy.

Data Organization Patterns

The bronze-silver-gold pattern organizes data management into stages.

- Bronze holds everything exactly as it arrives.

- Silver applies cleaning and standardization.

- Gold contains business-ready datasets that power customer dashboards and reports.
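The three stages above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production pipeline: the sample records, the field names, and the `to_silver` / `to_gold` helpers are all hypothetical, and a real lake would typically run these steps in an engine such as Spark over files in object storage.

```python
from collections import defaultdict

# Hypothetical raw events, kept exactly as they arrived (bronze).
bronze = [
    {"customer": " Acme ", "amount": "120.50", "quarter": "Q3"},
    {"customer": "acme", "amount": "79.50", "quarter": "Q3"},
    {"customer": "Globex", "amount": "not-a-number", "quarter": "Q3"},
    {"customer": "Globex", "amount": "200", "quarter": "Q3"},
]


def to_silver(rows):
    """Clean and standardize: normalize names, parse amounts, skip bad rows."""
    silver = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # unparseable rows remain available in bronze only
        silver.append({
            "customer": row["customer"].strip().lower(),
            "amount": amount,
            "quarter": row["quarter"],
        })
    return silver


def to_gold(rows):
    """Aggregate into a business-ready table: revenue per customer per quarter."""
    totals = defaultdict(float)
    for row in rows:
        totals[(row["customer"], row["quarter"])] += row["amount"]
    return dict(totals)


silver = to_silver(bronze)
gold = to_gold(silver)
print(gold)
```

Note that bronze is never modified. If a cleaning rule turns out to be wrong, silver and gold can be rebuilt from the untouched raw records.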

When JobNimbus integrated Qrvey’s embedded analytics, they gained this layered approach automatically. The platform unified data from multiple sources without heavy transformations while maintaining security across tenant boundaries.

The result was 70% user adoption of self-service reporting within months and dramatically reduced churn among enterprise customers who previously couldn’t get the flexible reporting they needed.

Common Data Sources

Your lake ingests from everywhere because customers generate data everywhere.

SaaS applications push user activity through APIs. Business apps export nightly snapshots. IoT devices stream telemetry continuously, generating terabytes from sensors monitoring equipment, vehicles, or environmental conditions across thousands of locations.

Differences Between Data Lake vs. Data Warehouse

Data warehouses demand structure upfront. You define tables, columns, and data types before loading anything. This works brilliantly for answering known business questions quickly but fails when you need to explore unstructured customer feedback or analyze clickstream patterns you hadn’t anticipated.

Data lakes flip this model. Store first, structure later. This flexibility matters when you’re ingesting sensor telemetry, social media posts, and application logs alongside traditional database exports.
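A rough way to picture "store first, structure later" is schema-on-read: raw records land untouched, and each consumer applies the shape it needs at query time. The sketch below assumes newline-delimited JSON and a hypothetical `read_with_schema` helper; the record contents are made up for illustration.

```python
import json

# Hypothetical raw lines landed in the lake with no upfront schema.
raw_lines = [
    '{"device": "pump-1", "temp_c": 71.5}',
    '{"user": "u42", "page": "/pricing", "ms_on_page": 5300}',
    '{"device": "pump-2", "temp_c": 64.0, "vibration": 0.2}',
]


def read_with_schema(lines, fields):
    """Apply a schema at read time: keep records that contain every requested field."""
    for line in lines:
        record = json.loads(line)
        if all(name in record for name in fields):
            yield {name: record[name] for name in fields}


# Two consumers read the same raw data with two different schemas.
telemetry = list(read_with_schema(raw_lines, ["device", "temp_c"]))
clicks = list(read_with_schema(raw_lines, ["user", "page"]))
print(telemetry)
print(clicks)
```

A warehouse would have rejected or reshaped the clickstream record at load time. Here it simply waits until someone asks a question that needs it.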

For SaaS companies delivering analytics to customers, multi-tenant database architecture influences this decision significantly. You need both the flexibility to handle diverse customer data structures and the performance to serve dashboards quickly across thousands of tenants simultaneously.

That’s exactly why platforms like Qrvey handle multi-tenant data isolation automatically, so you focus on your core product instead of building security models from scratch.

Challenges in Managing Data Lakes

Building a data lake is the easy part. Keeping it from becoming what one frustrated engineer calls “a dumping ground where nobody can find anything” is where most teams struggle.

Constantly Changing Schemas

When upstream systems evolve, everything downstream feels the pain. In manufacturing, inconsistent manual entries break ingestion pipelines. In healthcare, frequent updates to electronic records shuffle entire tables, forcing engineers to constantly rewrite logic.

The fix is automated schema detection and proactive alerts. Modern solutions like Delta Lake handle evolving structures natively, while clear data contracts with system owners prevent surprises.
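As a rough sketch of what automated schema detection can look like, the function below compares an incoming record against an agreed data contract and reports what drifted. The contract format, the field names, and `detect_schema_drift` are assumptions for illustration; this is not how Delta Lake implements schema evolution.

```python
def detect_schema_drift(expected, record):
    """Compare a record against an expected {field: type} contract."""
    missing = sorted(set(expected) - set(record))
    added = sorted(set(record) - set(expected))
    type_changes = sorted(
        name for name, expected_type in expected.items()
        if name in record and not isinstance(record[name], expected_type)
    )
    return {"missing": missing, "added": added, "type_changes": type_changes}


# Hypothetical contract agreed with the upstream system owner.
contract = {"patient_id": str, "heart_rate": int, "recorded_at": str}

# Hypothetical record after an upstream change.
incoming = {"patient_id": "p-17", "heart_rate": "72", "ward": "B"}

drift = detect_schema_drift(contract, incoming)
if any(drift.values()):
    # In a real pipeline this would raise an alert instead of printing.
    print("schema drift detected:", drift)
```

Running a check like this at ingestion turns a silent downstream failure into an alert the owning team sees first.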

Turning Into a Data Swamp

Without governance, a lake quickly turns murky. Michael Stonebraker, database pioneer, puts it bluntly: “Without clean data, or clean enough data, your data science is worthless.”

Teams lose track of datasets, quality degrades, and duplicated efforts multiply. The antidote is data cataloging: assigning ownership, tracking lineage, and scoring quality. Tools like Microsoft Purview and AWS Glue automate this, keeping your lake discoverable and dependable.
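To make the cataloging idea concrete, here is a minimal in-memory sketch that records an owner, upstream lineage, and a quality score for each dataset. The `CatalogEntry` and `Catalog` classes and the dataset names are hypothetical; tools like Microsoft Purview and AWS Glue provide their own, much richer models.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    name: str
    owner: str
    upstream: list = field(default_factory=list)  # datasets this one is built from
    quality_score: float = 0.0  # 0.0 (unusable) to 1.0 (trusted)


class Catalog:
    def __init__(self):
        self._entries = {}

    def register(self, entry):
        self._entries[entry.name] = entry

    def search(self, term):
        """Return dataset names containing the search term."""
        term = term.lower()
        return sorted(name for name in self._entries if term in name.lower())

    def lineage(self, name):
        """Return every upstream dataset reachable from `name`."""
        seen = []
        stack = list(self._entries[name].upstream)
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.append(current)
            if current in self._entries:
                stack.extend(self._entries[current].upstream)
        return seen


catalog = Catalog()
catalog.register(CatalogEntry("raw_orders", owner="ingestion-team", quality_score=0.6))
catalog.register(CatalogEntry("clean_orders", owner="data-eng", upstream=["raw_orders"], quality_score=0.9))
catalog.register(CatalogEntry("revenue_q3", owner="finance", upstream=["clean_orders"], quality_score=0.95))

print(catalog.search("orders"))
print(catalog.lineage("revenue_q3"))
```

With ownership and lineage recorded, a question like the Q3 revenue one from the introduction has a single answer and a named person to confirm it.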

Security Across Tenant Boundaries

For SaaS teams, one accidental exposure between tenants can destroy trust overnight. Managing access at the file level doesn’t scale, which makes understanding security models critical when building customer-facing analytics.
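One common alternative to per-file permissions is to scope every read and write to a tenant prefix and refuse anything that tries to leave it. The sketch below is a generic illustration under that assumption; the `tenants/<tenant_id>/...` layout, `tenant_path`, and `TenantAccessError` are hypothetical names and do not describe any particular vendor's mechanism.

```python
from pathlib import PurePosixPath


class TenantAccessError(Exception):
    """Raised when a requested key would fall outside the tenant's prefix."""


def tenant_path(tenant_id, relative_key):
    """Build an object key under the tenant's prefix, rejecting escapes."""
    if not tenant_id or "/" in tenant_id or tenant_id in (".", ".."):
        raise TenantAccessError(f"invalid tenant id: {tenant_id!r}")
    if PurePosixPath(relative_key).is_absolute():
        raise TenantAccessError("absolute keys are not allowed")
    candidate = PurePosixPath("tenants") / tenant_id / relative_key
    if ".." in candidate.parts:
        raise TenantAccessError("parent-directory references are not allowed")
    return str(candidate)


print(tenant_path("acme", "gold/revenue.parquet"))

try:
    tenant_path("acme", "../globex/gold/revenue.parquet")
except TenantAccessError as error:
    print("blocked:", error)
```

Because every access goes through one function, the isolation rule lives in one place instead of being repeated on thousands of individual files.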

Qrvey simplifies this with built-in tenant separation and inherited access controls, ensuring every customer’s data remains secure while your analytics stay fast, flexible, and compliant.


Best Practices for Data Lake Management

Rather than follow every rule in the book, manage your data lake by preventing these three core problems that hurt customers.

Implement Three-Pillar Governance

Sopirala’s framework provides a practical starting point:

- Curating data means running automated quality checks that flag missing values, duplicates, and suspicious outliers.

- To understand data, build searchable catalogs that every team member can query.

- Protecting data ensures “proper access, retention, secure compliance with regulations and management of the data lifecycle.”

These aren’t separate initiatives but interconnected practices. You can’t protect data you don’t understand, and you can’t make data useful for business decision makers without first curating quality.
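The curation pillar can be sketched as a small automated check that flags missing values, duplicates, and suspicious outliers. The `quality_report` function, the sample rows, and the outlier rule (distance from the median measured in median absolute deviations, with an arbitrary threshold of 5) are illustrative assumptions, not part of Sopirala's framework.

```python
from statistics import median


def quality_report(rows, key, value_field, threshold=5.0):
    """Return row indexes flagged as missing, duplicate, or outlier."""
    missing = [i for i, row in enumerate(rows) if row.get(value_field) is None]

    seen, duplicates = set(), []
    for i, row in enumerate(rows):
        if row[key] in seen:
            duplicates.append(i)
        seen.add(row[key])

    values = [row[value_field] for row in rows if row.get(value_field) is not None]
    outliers = []
    if values:
        center = median(values)
        mad = median([abs(v - center) for v in values])
        if mad > 0:  # with zero spread, this rule cannot rank outliers
            outliers = [
                i for i, row in enumerate(rows)
                if row.get(value_field) is not None
                and abs(row[value_field] - center) / mad > threshold
            ]
    return {"missing": missing, "duplicates": duplicates, "outliers": outliers}


# Hypothetical order rows with one duplicate, one missing value, one outlier.
rows = [
    {"order_id": 1, "amount": 100.0},
    {"order_id": 2, "amount": 110.0},
    {"order_id": 2, "amount": 110.0},
    {"order_id": 3, "amount": None},
    {"order_id": 4, "amount": 95.0},
    {"order_id": 5, "amount": 9000.0},
]

report = quality_report(rows, key="order_id", value_field="amount")
print(report)
```

The median-based rule is used here because a single extreme value distorts a mean and standard deviation far more than it distorts a median.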

Use Open Formats and Separate Compute from Storage

Data lakes thrive on flexibility. Storing data in open formats such as Apache Parquet, with Delta Lake or Iceberg for schema evolution, prevents vendor lock-in and keeps your architecture future-proof.

Separating storage and compute lets you keep raw data in affordable object storage while paying for compute only during active workloads. This approach cuts costs and lets multiple teams query data simultaneously without performance bottlenecks. Qrvey’s architecture leverages this model to deliver enterprise-grade scalability for SaaS analytics.

Automate Monitoring and Lifecycle Management

Manual oversight can’t keep up with today’s data velocity. Automated monitoring helps detect anomalies (like ingestion spikes or schema changes) before they affect dashboards. Lifecycle policies should archive or delete old data automatically to stay compliant with privacy laws and reduce costs.
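A lifecycle policy boils down to a rule that maps an object's age to an action. The sketch below assumes hypothetical thresholds of 90 days before archiving and 365 days before deletion; real retention periods depend on your regulatory obligations, and cloud object stores offer built-in lifecycle rules that would replace this logic in practice.

```python
from datetime import date


def lifecycle_action(last_modified, today, archive_after_days=90, delete_after_days=365):
    """Decide what to do with an object based on its age in days."""
    age_days = (today - last_modified).days
    if age_days >= delete_after_days:
        return "delete"
    if age_days >= archive_after_days:
        return "archive"
    return "keep"


# Hypothetical object keys and last-modified dates.
today = date(2024, 6, 1)
objects = {
    "bronze/events-2024-05-01.json": date(2024, 5, 1),
    "bronze/events-2024-01-01.json": date(2024, 1, 1),
    "bronze/events-2023-01-01.json": date(2023, 1, 1),
}

plan = {key: lifecycle_action(modified, today) for key, modified in objects.items()}
for key, action in plan.items():
    print(f"{action:8} {key}")
```

Passing `today` in as a parameter, rather than reading the clock inside the function, keeps the rule easy to test and to replay for audits.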

For SaaS teams delivering analytics to customers rather than internal business intelligence, Qrvey takes a different approach.

The platform includes a built-in multi-tenant data lake that automatically partitions everything by customer. When you connect data sources, tenant isolation happens automatically, no custom security code required.

Customers get embeddable dashboards they can customize themselves, eliminating endless feature requests for “just one more chart.”

See how to embed a dashboard with Qrvey in this clickable demo.

Tools & Technologies for Data Lake Management

The real standouts in data lake management are the tools that balance flexible storage, airtight governance, and cost-efficient performance.

AWS Lake Formation

AWS Lake Formation helps simplify building and governing a data lake on AWS. It works with data stored in Amazon S3, integrates with AWS Glue for ETL, and centralizes access control through a unified permissions model.

Its standout feature is centralized permissions: you define access once, and it is enforced across services like S3, Glue, Athena, and Redshift Spectrum. This reduces operational overhead and improves security consistency.

It also integrates with the AWS Glue Data Catalog for metadata management, while Glue crawlers handle schema detection.

Azure Synapse Analytics and Purview

Microsoft’s solution spans multiple services. Azure Synapse Analytics provides a unified environment for querying and processing data using SQL pools and Apache Spark, while integrating with Azure Data Lake Storage Gen2 for scalable storage.

Microsoft Purview (formerly Azure Purview) handles data governance by scanning, classifying, and tracking data across systems.

For orchestration, Azure Data Factory (and Synapse pipelines) automate data movement and transformation workflows.

Qrvey’s Embedded Analytics Platform

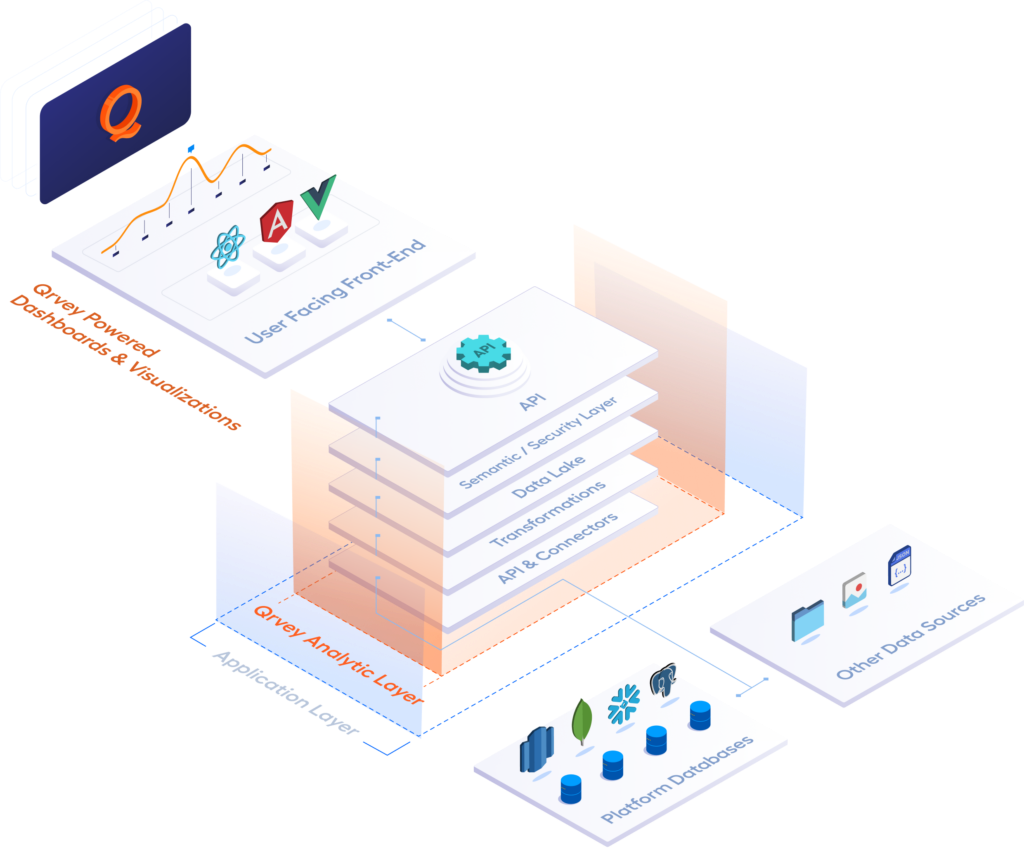

Qrvey takes data lake management a step further, built specifically for SaaS companies delivering analytics to their customers as part of their core product. It combines a multi-tenant data lake powered by Elasticsearch with built-in governance, automation, and embedded analytics.

Every customer’s data is automatically isolated, ensuring security without extra code. Its semantic layer keeps metrics consistent across all tenants, while users can personalize dashboards and reports.

Deployed directly in your cloud, Qrvey inherits your existing security and infrastructure.

Data Lake Management Use Cases

Here’s how companies apply best practices when customer retention depends on the analytics you deliver.

Unifying Patient Records

Healthcare organizations pull data from electronic health records, lab systems, imaging archives, and IoT monitoring devices.

Traditional warehouses struggle with this mix, but lakes handle it naturally. Machine learning models can then predict readmission risk using everything stored.

Fraud Detection

Banks process millions of transactions hourly, streaming them via Apache Kafka into lakes where Spark analyzes patterns in real time. Each transaction gets scored against fraud models within milliseconds.

The lake stores everything (transaction history, clickstream data, device fingerprints) so analysts investigate suspicious activity immediately. Data governance creates audit trails regulators require while lineage tracking proves which model version flagged each case.
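The audit-trail idea can be sketched as follows: every score is stored together with the model version that produced it and a hash of the exact input. The scoring rules, the `MODEL_VERSION` label, the threshold, and the field names are all hypothetical stand-ins for a real fraud model.

```python
import hashlib
import json

MODEL_VERSION = "rules-v1"  # hypothetical version label


def score_transaction(txn):
    """Toy rule-based score between 0 and 1 (a stand-in for a real model)."""
    score = 0.0
    if txn["amount"] > 5000:
        score += 0.5
    if txn["country"] != txn["card_country"]:
        score += 0.4
    return min(score, 1.0)


def audit_record(txn, score, threshold=0.7):
    """Capture what was scored, by which model version, and the outcome."""
    payload = json.dumps(txn, sort_keys=True).encode("utf-8")
    return {
        "txn_id": txn["txn_id"],
        "input_sha256": hashlib.sha256(payload).hexdigest(),
        "model_version": MODEL_VERSION,
        "score": score,
        "flagged": score >= threshold,
    }


txn = {"txn_id": "t-1001", "amount": 7200.0, "country": "BR", "card_country": "US"}
record = audit_record(txn, score_transaction(txn))
print(record["model_version"], record["flagged"])
```

Hashing the serialized input lets an auditor confirm later that the stored transaction is the one the model actually saw.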

For platforms delivering these capabilities to customers, embedded analytics operations and workflow automation like the kind Qrvey provides transform how quickly you can ship new analytical features without proportionally increasing engineering headcount.

See how to build a workflow with Qrvey in this clickable demo.

The Future of Data Lakes & Key Innovations to Watch Out For

The data lake landscape keeps evolving as SaaS companies demand more from their analytics infrastructure. Here’s what’s reshaping how you’ll manage lakes:

- Data Lakehouse architectures blur the line between lakes and warehouses: Delta Lake, Apache Iceberg, and Apache Hudi add transaction support and query performance directly to lake storage, eliminating the need to copy data between systems for different workloads.

- Real-time stream processing matures beyond batch jobs: Apache Flink and Spark Structured Streaming now process events with sub-second latency, making real-time dashboards practical for customer-facing analytics instead of just internal monitoring.

- AI-powered metadata management automates what teams manually catalog today: Expect automatic data classification, lineage tracking, and quality scoring to shift from nice-to-have features to regulatory requirements that platforms handle automatically.

For SaaS teams, this means delivering sophisticated analytics to customers without proportionally increasing engineering headcount. That is exactly why platforms like Qrvey, which handle multi-tenant governance and self-service capabilities, help you match the features of larger competitors.

Turn Your Data Lake Into a Customer Retention Engine

You’ve built a data lake. Your customers need insights from it. Building analytics from scratch means months of development, managing multi-tenant security, handling schema evolution, and fielding endless feature requests.

Qrvey connects to your existing lake and handles tenant isolation automatically. Your customers get self-service dashboards they customize without submitting tickets. JobNimbus proved this model works, turning analytics from a churn driver into a competitive advantage within months.

Explore interactive demos to see how customer-facing analytics work in production SaaS applications, or learn how data analytics modernization transforms product roadmaps by offloading analytics expertise so your team can focus on core capabilities.

David is the Chief Technology Officer at Qrvey, the leading provider of embedded analytics software for B2B SaaS companies. With extensive experience in software development and a passion for innovation, David plays a pivotal role in helping companies successfully transition from traditional reporting features to highly customizable analytics experiences that delight SaaS end-users.

Drawing from his deep technical expertise and industry insights, David leads Qrvey’s engineering team in developing cutting-edge analytics solutions that empower product teams to seamlessly integrate robust data visualizations and interactive dashboards into their applications. His commitment to staying ahead of the curve ensures that Qrvey’s platform continuously evolves to meet the ever-changing needs of the SaaS industry.

David shares his wealth of knowledge and best practices on topics related to embedded analytics, data visualization, and the technical considerations involved in building data-driven SaaS products.
