⚡Key Takeaways

- AI in SaaS has moved from competitive edge to baseline expectation: 92% of SaaS companies have launched or plan to launch AI features. The question is no longer whether to add AI but whether your implementation is deep enough to matter

- The real competitive risk is letting AI agents bypass your UI and erode your end-user relationship entirely

- Buying an AI SaaS platform or embedding AI capabilities is consistently faster and cheaper than building in-house, which takes 18–36 months and carries high engineering risk

You can feel it in your pipeline: prospects ask about AI features before pricing, and customers who used to be locked in are suddenly “evaluating alternatives.” AI in SaaS has moved past a product trend to become the line between winning and losing renewal conversations.

This guide covers where the market stands in 2026: which use cases generate measurable retention and revenue impact, how to decide between buying and building AI capabilities, and the implementation traps that waste engineering cycles without moving the needle for customers.

The Role of AI in SaaS in 2026

Forrester called it early: in 2026, AI trades its “tiara for a hard hat.” The hype cycle is over and what’s left is the harder question: is AI load-bearing in your product or is it decorative?

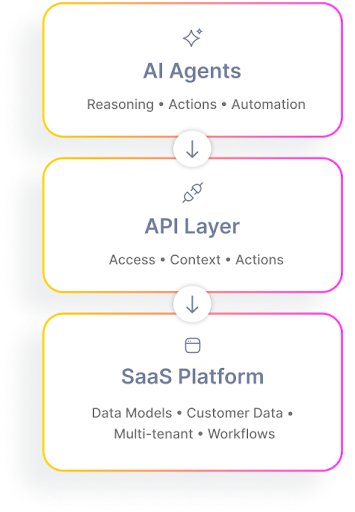

David Abramson, CTO at Qrvey, framed the threat clearly: SaaS platforms are “AI-Ready by Design” because they already hold the keys, i.e., structured data, APIs, and multi-tenant architecture. That’s the good news.

The risk is that those same ingredients make it trivially easy for external AI agents to sit on top of your product, delivering just enough value that user loyalty starts migrating away from your UI entirely. This makes proactive SaaS customer retention strategies more critical than ever to protect your core revenue.

SaaS teams avoiding that outcome are embedding AI deeply enough that their platform is the intelligence layer, not a backend that feeds one.

4 AI in SaaS Use Cases Worth Paying Attention To

If you’re deciding where to invest in AI in SaaS, start with the use cases already proving value across real SaaS products.

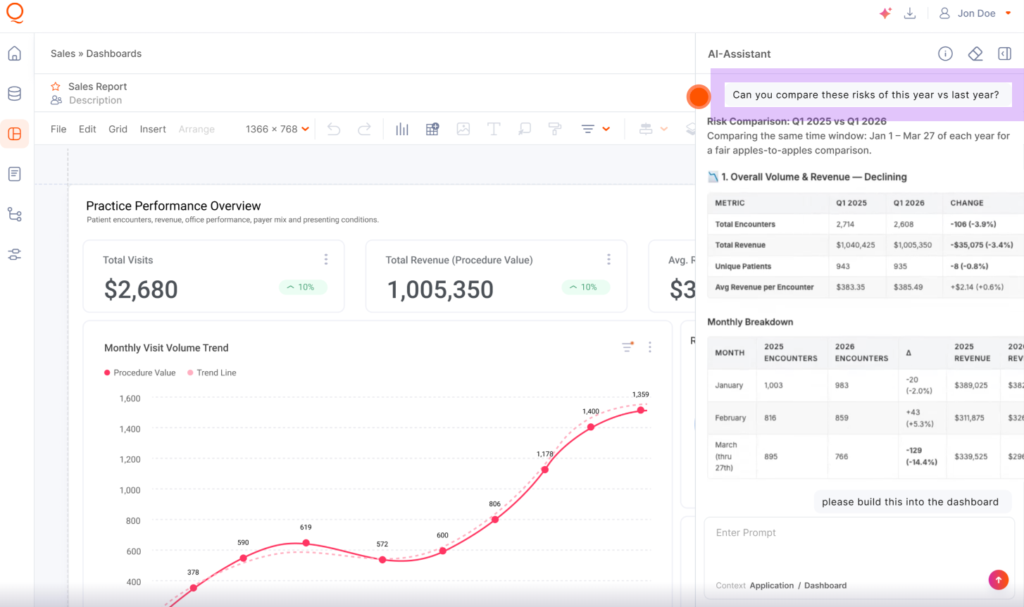

1. Embedded Agentic Analytics

Imagine your customers logging into your platform and instead of navigating dashboards, they just ask: “Show me which accounts are at risk of churning this quarter.” No SQL or filter stacks.

That’s what AI-powered embedded analytics looks like today, and it’s where AI creates the most measurable product value for multi-tenant SaaS companies specifically.

Qrvey’s AI Chart Builder is a good example: users describe a visualization in plain language, select a dataset, and have a chart placed directly on their dashboard.

When this is done well, the AI operates entirely within each tenant’s existing security model. Anomaly detection and follow-up suggestions don’t require reconfiguring permissions or exposing cross-tenant data.
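As a minimal sketch of that idea, and not a description of how Qrvey or any specific product implements it, the example below filters data down to the caller's tenant before any AI feature sees it. The names `TenantContext`, `scoped_rows`, and `at_risk_accounts`, along with the sample data and the 0.7 threshold, are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantContext:
    """Hypothetical per-request context derived from the signed-in user."""
    tenant_id: str
    allowed_datasets: frozenset


def scoped_rows(rows, dataset, ctx):
    """Return only the rows this tenant may see; refuse datasets it has no access to."""
    if dataset not in ctx.allowed_datasets:
        raise PermissionError(
            f"dataset {dataset!r} is not available to tenant {ctx.tenant_id!r}"
        )
    return [row for row in rows if row["tenant_id"] == ctx.tenant_id]


def at_risk_accounts(rows, dataset, ctx, threshold=0.7):
    """Stand-in for an AI feature: it only ever receives tenant-scoped rows."""
    visible = scoped_rows(rows, dataset, ctx)
    return [row["account"] for row in visible if row["churn_risk"] >= threshold]


# Illustrative data: two tenants share one table.
ACCOUNTS = [
    {"tenant_id": "t1", "account": "Acme", "churn_risk": 0.9},
    {"tenant_id": "t1", "account": "Birch", "churn_risk": 0.2},
    {"tenant_id": "t2", "account": "Cobalt", "churn_risk": 0.95},
]

ctx = TenantContext(tenant_id="t1", allowed_datasets=frozenset({"accounts"}))
print(at_risk_accounts(ACCOUNTS, "accounts", ctx))
```

The design choice worth noting is that the scoping happens in one place, before the feature logic runs, so adding a new AI capability does not mean re-implementing permission checks.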

For a product leader at a 300-person SaaS company, this collapses the gap between “we have a dashboard” and “our customers use it.” If your analytics adoption is low, the problem is usually the friction between the question a user has and the answer your UI forces them to hunt for.

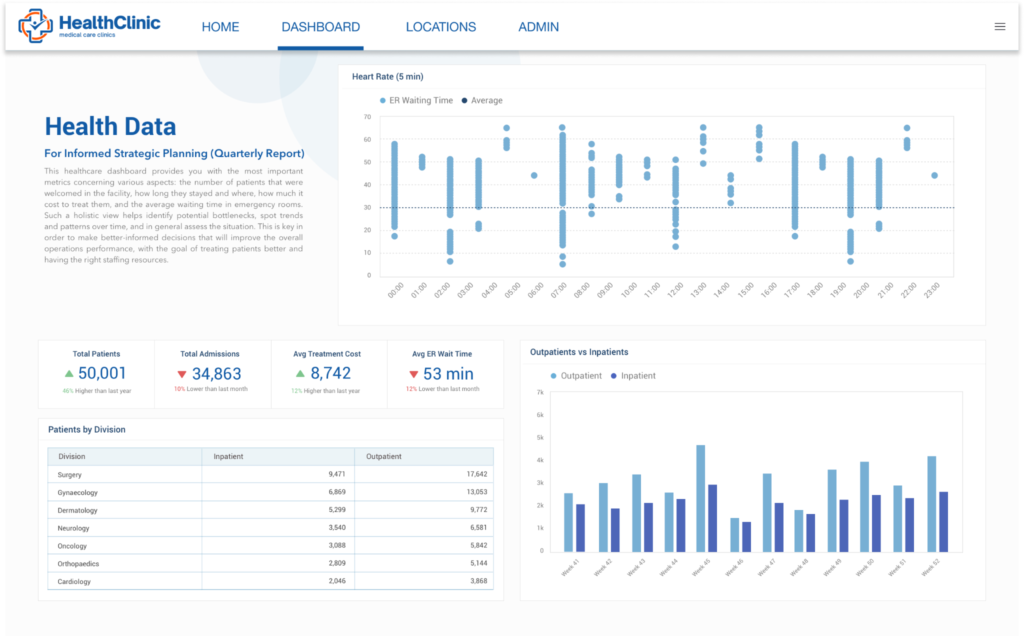

2. Predictive Patient Outcomes in Healthcare

In healthcare SaaS, AI is being used to analyze structured data from EHRs to predict readmission risks.

By embedding these insights, a clinical platform can alert staff to a high-risk patient before they are even discharged. This moves the needle from retrospective reporting to real-time clinical intervention.

3. AI-Powered Customer Support

Tools like Intercom’s Fin and Zendesk AI now resolve over 50% of inbound support queries without escalation, per their own benchmarks. Fewer tickets reaching a human means lower support costs and faster response times.

Those outcomes show up directly in CSAT scores, and for SaaS companies with enterprise accounts, CSAT is a renewal lever.

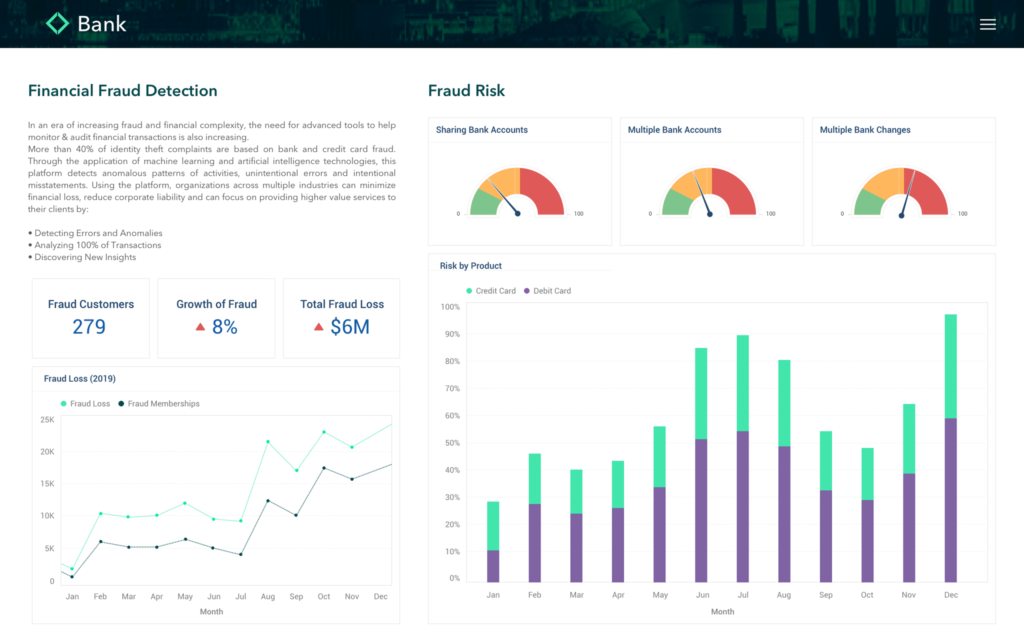

4. Autonomous Fraud Detection

FinTech platforms now use predictive models to monitor transaction streams.

Instead of waiting for a manual flag, autonomous AI agents can spot anomalous patterns (like a sudden spike in cross-border transfers), then trigger a webhook to freeze the account and notify the compliance officer via Slack.
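A rough illustration of that flow, using a simple z-score check as a stand-in for a real predictive model: the sketch below flags a spike against recent history and returns the actions an agent would dispatch. The function names, the event name `account.freeze`, the threshold, and the sample counts are all assumptions, and actually sending the webhook or Slack message is left out.

```python
from statistics import mean, stdev


def is_spike(history, current, z_threshold=3.0):
    """Flag `current` if it is more than `z_threshold` standard deviations above the historical mean."""
    if len(history) < 2:
        return False  # not enough data to estimate variation
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return current > mu
    return (current - mu) / sigma > z_threshold


def plan_actions(account_id, history, current):
    """Return the actions to dispatch; delivering them is the caller's job."""
    if not is_spike(history, current):
        return []
    return [
        # Hypothetical webhook event that asks the core platform to freeze the account.
        {"type": "webhook", "event": "account.freeze", "account_id": account_id},
        # Hypothetical notification for the compliance officer's channel.
        {
            "type": "notify",
            "channel": "compliance",
            "text": f"Cross-border transfers spiked to {current} for account {account_id}",
        },
    ]


hourly_cross_border_transfers = [2, 3, 1, 2, 4, 3, 2]  # illustrative counts
actions = plan_actions("acct-123", hourly_cross_border_transfers, current=25)
for action in actions:
    print(action["type"])
```

Returning a plan instead of firing side effects directly keeps the detection logic testable and leaves room for an approval step before an account is frozen.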

Buy vs. Build AI SaaS Applications or Features

Every SaaS team hits the same question eventually: do you build AI features in-house or plug in something that’s already working?

Buy: Speed, Expertise, and Lower Total Cost

Building an AI-powered embedded analytics layer from scratch takes 18–36 months, before you factor in multi-tenant data isolation, security token flows, and query performance at scale across thousands of tenants.

JobNimbus, a CRM for roofing contractors, chose to embed Qrvey’s analytics rather than build in-house.

Within months, they saw 70% adoption among enterprise users and meaningfully reduced churn by giving non-technical contractors the ability to build custom reports themselves.

Qrvey’s flat-rate pricing also removes the growth tax of per-seat models by keeping costs predictable with unlimited users, tenants, and dashboards under one annual rate.


Build: Proprietary IP

There are cases where building makes sense, e.g. when the AI model itself is your “secret sauce” (for example, a unique algorithm for identifying rare diseases in medical imaging) or when you need to control the full infrastructure.

But even then, you’ll likely buy the infrastructure (container orchestration, data lake architecture) to host that model. Building the entire stack, from the semantic layer to the UI, usually leads to the “escalating costs” that Gartner warns will lead to the cancellation of 40% of AI projects by 2027.

Generative AI in Embedded Analytics: Unlocking New Possibilities

Generative AI is unlocking new possibilities in embedded analytics. SaaS platforms now automate report generation, create dynamic self-service dashboards, and personalize insights for every user.

This technology accelerates decision-making, reduces manual effort, and empowers users to explore data in innovative ways.

Developer Productivity

Generative AI boosts developer productivity by automating code generation, testing, and documentation. SaaS teams can build features faster, reduce errors, and focus on strategic tasks. This leads to shorter release cycles and more robust applications.

Power User Content Generation

Power users benefit from AI-driven content generation, creating reports, presentations, and dashboards with minimal effort.

SaaS platforms use generative models to suggest templates, automate formatting, and ensure consistency, saving time and improving output quality.

End User Conversational AI

Conversational AI transforms end-user experiences by enabling natural language interactions. Users can query data, request insights, and receive personalized recommendations through chat interfaces. This democratizes access to analytics and makes SaaS platforms more intuitive.

See how conversational AI with MCP can work in your SaaS product in this clickable demo.

How AI Is Implemented in SaaS Today & Adoption Data

64% of SaaS companies embed AI as a supporting feature, while 36% have made it core to their product. That split tells you exactly where the market is heading. If AI is still a side feature in your roadmap, you’re competing against products where AI is the experience.

But implementation is the main differentiator.

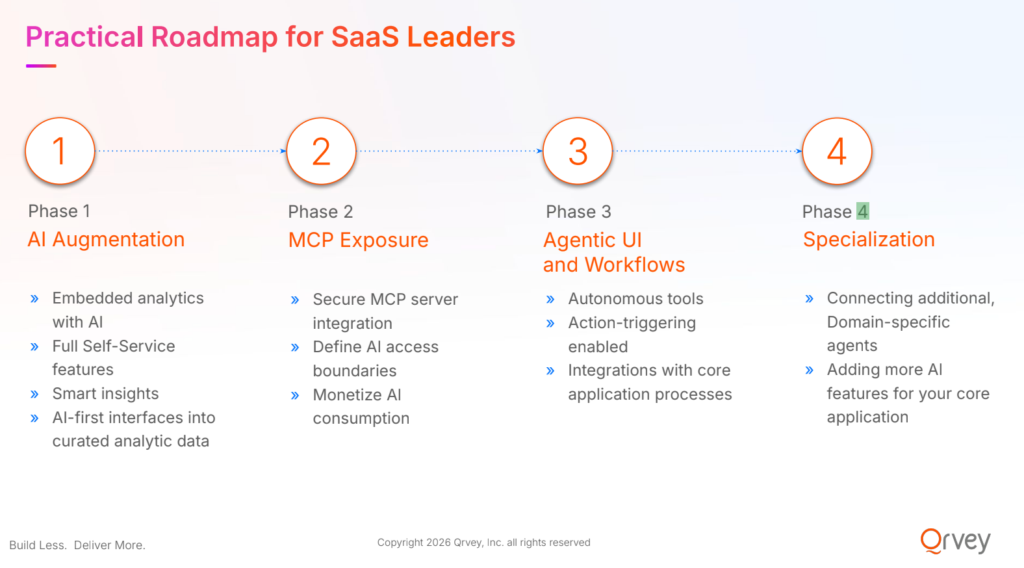

As David Abramson, Qrvey CTO, outlined at CPO Summit NYC 2026, the winning path follows a clear progression: augmentation → secure AI access → agentic workflows → domain-specific copilot.

The Augmentation Phase

Most SaaS teams start with augmentation: AI-generated insights, natural language queries, and smart recommendations layered on top of existing data. This is low risk and fast to ship because you’re not changing your architecture yet, just improving how users interact with it.

The Agentic Evolution

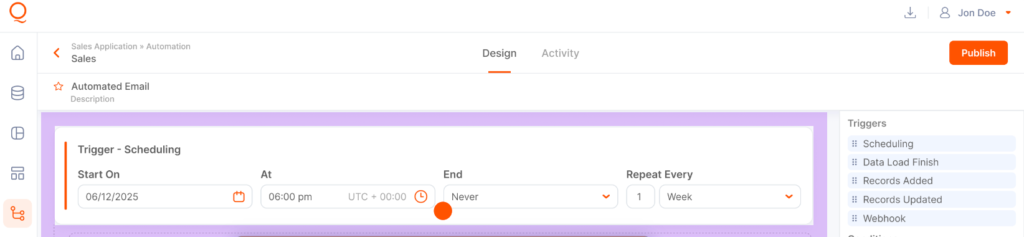

The next step is agentic behavior: AI that monitors data, triggers workflows, and orchestrates actions across systems.

This requires deeper integration with APIs, multi-tenant data layers, and security models, which is where platforms like Qrvey help teams move faster without rebuilding everything.

| Step | Traditional SaaS | AI-Enhanced SaaS |
|---|---|---|
| Insights | Static dashboards | AI-generated insights in real time |
| Interaction | Filters and clicks | Natural language queries |
| Actions | Manual workflows | Automated triggers and execution |
| Scaling | Dev-heavy updates | API-driven, AI-augmented systems |

Categorizing AI in SaaS

Before you add another AI feature to your roadmap, it helps to break down how AI in SaaS is structured and where each type actually fits.

By Capabilities

Generative AI (text, code, chart creation), predictive AI (churn and forecasting), and conversational AI (natural language interfaces) are the three primary capability categories.

Qrvey’s AI Chart Builder is a prime example of Generative AI, allowing users to turn a plain-language prompt into a fully functional, filterable chart inside the product, not a static output.

By Functional Areas

Analytics, automation, content generation, and developer tooling are where SaaS AI tools deliver the most measurable value right now.

By Learning Techniques

Most SaaS platforms use a mix of large language models for UI interactions and specialized deep learning or reinforcement learning for domain-specific tasks like credit scoring or churn prediction.

By Application Areas

Customer-facing features (in-product analytics, AI assistants) vs. internal process acceleration (QA automation, documentation) carry different governance requirements and different risk profiles; don’t treat them the same.

By Algorithms and Approaches

The practical distinction for product teams: does the AI surface insights or take autonomous action? The latter requires tighter controls and tenant-level audit trails.

For example, Qrvey’s workflow builder lets teams define triggers and actions, moving from “insight” to “execution” without writing backend logic.

By Deployment Models

Cloud-hosted AI APIs (OpenAI, Anthropic, Gemini) vs. self-hosted models vs. platform-embedded AI each carry different data privacy and cost implications.

For multi-tenant SaaS, platform-embedded or self-hosted options give you tenant-level data control that shared API calls can’t guarantee by default.

3 Key Benefits of Adopting AI in Your SaaS

The benefits of AI in SaaS only show up when it improves how your product thinks, responds, and acts. Here are some real, measurable impacts.

Your Analytics Layer Becomes a Retention Moat

When each of your tenants can query their data in plain language and receive proactive anomaly alerts, your product becomes harder to replace. Customers who hit a wall and reach for a spreadsheet are a churn risk wearing a subscription.

Lower Cloud Infrastructure Costs

AI-optimized data engines and native multi-tenant data lake architectures reduce the volume of queries hitting a cloud data warehouse such as Snowflake directly.

EvenFlow AI, an automotive scheduling SaaS, embedded Qrvey and reduced operational capacity inefficiencies by up to 30%, automating parts management workflows that previously required manual analysis and engineering time.

New Revenue Models Become Viable

AI-native SaaS enables pricing models that weren’t viable before: per-AI-query billing, premium AI tiers, and MCP access fees for partners who want structured data access.
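To make per-AI-query billing concrete, here is a minimal metering sketch. It assumes a plan with an included query allowance and a flat overage price; the class name `QueryMeter`, the allowance of 100 queries, and the price of 0.02 per query are invented for illustration and do not reflect any vendor's pricing.

```python
from collections import Counter
from decimal import Decimal


class QueryMeter:
    """Counts AI queries per tenant and prices usage beyond an included allowance."""

    def __init__(self, included_queries, price_per_query):
        self.included_queries = included_queries
        # Decimal avoids floating-point rounding surprises in money math.
        self.price_per_query = Decimal(price_per_query)
        self._counts = Counter()

    def record(self, tenant_id):
        """Call once per AI query served for the tenant."""
        self._counts[tenant_id] += 1

    def overage_charge(self, tenant_id):
        """Charge only for queries above the included allowance."""
        billable = max(0, self._counts[tenant_id] - self.included_queries)
        return billable * self.price_per_query


meter = QueryMeter(included_queries=100, price_per_query="0.02")
for _ in range(150):
    meter.record("tenant-a")
for _ in range(40):
    meter.record("tenant-b")

print(meter.overage_charge("tenant-a"))
print(meter.overage_charge("tenant-b"))
```

A premium AI tier could be modeled the same way by giving each plan its own allowance and overage price.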

As Abramson put it: “AI capabilities transform cost centers into profit centers.”

For SaaS executives focused on NDR and expansion revenue, that framing is worth sitting with.

Challenges of Implementing AI in SaaS Applications

The hard part of AI in SaaS is making sure it’s secure, affordable, and actually useful across thousands of customers.

Privacy and Security

Cisco reports 64% of teams worry about sensitive data exposure, yet nearly half still input private data into AI tools. For SaaS products, that risk multiplies across tenants.

Tenant-level data isolation, security token authentication that inherits your existing access model, and audit trails for every AI-generated output need to be in your design from day one, not retrofitted after an incident.
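The three requirements above can be sketched together in a few lines. This is an illustration under stated assumptions, not a production design: it uses a hand-rolled HMAC-signed token built from the Python standard library where a real system would more likely rely on a vetted standard such as JWT, it omits token expiry, and the names `sign_token`, `verify_token`, `answer_with_audit`, and `AUDIT_LOG` are hypothetical.

```python
import base64
import hashlib
import hmac
import json

SECRET = b"demo-only-secret"  # assumption: a real key would come from a secrets manager


def sign_token(claims, secret=SECRET):
    """Encode claims and append an HMAC-SHA256 signature."""
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(secret, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def verify_token(token, secret=SECRET):
    """Return the claims if the signature checks out, otherwise raise."""
    payload, _, signature = token.rpartition(".")
    expected = hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        raise PermissionError("invalid token signature")
    return json.loads(base64.urlsafe_b64decode(payload.encode()))


AUDIT_LOG = []  # stand-in for durable, append-only storage


def answer_with_audit(token, question, rows):
    """Answer using only the caller's tenant data and record what was returned."""
    claims = verify_token(token)
    visible = [row for row in rows if row["tenant_id"] == claims["tenant_id"]]
    answer = f"{len(visible)} matching rows"  # placeholder for a model-generated answer
    AUDIT_LOG.append(
        {
            "tenant_id": claims["tenant_id"],
            "user": claims["user"],
            "question": question,
            "answer": answer,
        }
    )
    return answer


rows = [{"tenant_id": "t1"}, {"tenant_id": "t1"}, {"tenant_id": "t2"}]
token = sign_token({"tenant_id": "t1", "user": "dana"})
print(answer_with_audit(token, "How many records do we have?", rows))
```

Because the tenant comes from the verified token and not from the request body, the AI path inherits the same access model as the rest of the product.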

Cost and Power Consumption

Dan Herbatschek’s 2026 analysis found companies underestimating total AI costs by 30% or more. Inference costs, storage, model fine-tuning, and in-production maintenance compound faster than most roadmap planning cycles account for.

Making Business Sense

The AI features that survive budget scrutiny are tied to measurable outcomes: reduced churn, higher adoption, faster customer time-to-value. If a roadmap item can’t be connected to a specific product metric or revenue outcome, it belongs in the backlog.

One practical test before you prioritize an AI feature: can you describe the moment in your customer’s workflow where this creates a noticeably better outcome than what they have today?

Bringing AI-Supported Embedded Analytics Into Your Product with Qrvey

The fastest path to bringing AI into your SaaS product is integrating proven systems that already handle multi-tenant data, embedded analytics, and AI-driven workflows.

Qrvey lets SaaS teams do exactly that: embed AI-powered analytics directly into their product, deploy in their own cloud, and give users real-time insights without building the infrastructure from scratch.

If you’re exploring vendors, our Embedded Analytics Evaluation Guide is a good place to map real capabilities against your requirements. When you’re ready to see it in action, book a demo, and we’ll walk you through agentic analytics running inside a product like yours.

Arman Eshraghi is the CEO and founder of Qrvey, the leading embedded analytics solution for SaaS companies. With over 25 years of experience in data analytics and software development, Arman has a deep passion for empowering businesses to unlock the full potential of their data.

His extensive expertise in data architecture, machine learning, and cloud computing has been instrumental in shaping Qrvey’s innovative approach to embedded analytics. As the driving force behind Qrvey, Arman is committed to revolutionizing the way SaaS companies deliver data-driven experiences to their customers. With a keen understanding of the unique challenges faced by SaaS businesses, he has led the development of a platform that seamlessly integrates advanced analytics capabilities into software applications, enabling companies to provide valuable insights and drive growth.
